HPE has expanded its Nvidia-based AI portfolio with new systems built on Blackwell and upcoming Rubin GPUs, alongside updates to its Alletra Storage MP X10000, which it claims is the first object storage platform to achieve Nvidia-Certified Storage validation.

The company is also announcing new Nvidia-powered AI Factory and Supercomputing ranges, which include AI grids and enable so-called sovereign AI in Europe and the US.

HPE president and CEO Antonio Neri said: “The AI race is fundamentally about speed, scale, and trust. Our industry leadership across cloud, networking, and AI enables organizations to operationalize AI securely, efficiently, and at an unprecedented scale. Together with Nvidia, HPE delivers turnkey AI factories and networks that transform AI ambitions into real business value.”

Sovereignty push

HPE noted that it is building and installing the supercomputer for the European Union AI Factory HammerHAI (Hybrid and Advanced Machine learning platform for Manufacturing, Engineering, and Research). A consortium of leading academic HPC centers in Germany will lead this effort. HPE said the work would allow orgs to “scale AI initiatives” while “adhering to regional data sovereignty and compliance requirements.”

Dr Bastian Koller, Managing Director of the High Performance Computing Center at Stuttgart University and lead coordinator of HammerHAI, said of the partnership: “HammerHAI will offer a highly performant AI platform, alongside services like AI skills training, as an alternative for future users who have historically relied on commercial cloud AI services in which data sovereignty was difficult to ensure.”

Dr Koller added: “This integrated approach will help researchers, startups, and enterprises access AI resources while operating in alignment with European Union data protection requirements.”

Storage crunch

HPE says it is the first vendor to achieve Nvidia-Certified Storage validation for object-based systems at the Foundation level, with the Alletra Storage MP X10000. Nvidia has validated and benchmarked the array’s performance for workloads of up to 128 GPUs, performed functional tests for enterprise-grade availability and reliability, and confirms that the storage layer efficiently feeds data to accelerated computing resources to deliver faster model training, lower-latency inference, and better overall utilization.

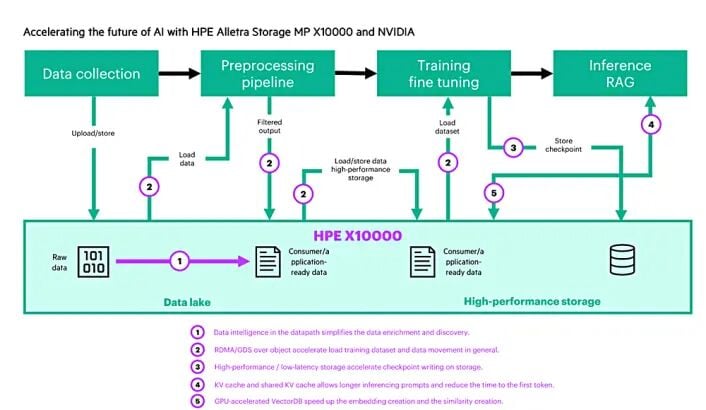

An HPE blog discusses how the Alletra Storage MP X10000 and Nvidia RDMA for S3 speed up AI pipelines, RAG, and real-time inference with low-latency, high-throughput, GPU-direct information paths. It comprises this diagram illustrating the X10000’s help for AI pipeline levels:

The X10000 is central to HPE’s storage activities in AI. HPE has indexed terabyte-scale vector data in under an hour using the X10000, Nvidia cuVS CAGRA GPU-accelerated vector indexing, and cuObject for accelerated storage I/O. Another blog explains how it did this, showing a 17x improvement in index build time and an 8x improvement in total end-to-end pipeline transport using a single Nvidia H100 and accelerated remote direct memory access (RDMA).

HPE says it is evolving the X10000 to centralize intelligent data handling and optimize how AI workloads ingest, process, and deliver data. The company is also supporting the new Nvidia STX rackscale reference architecture to develop new AI storage offerings powered by Vera Rubin accelerators, BlueField-4 DPUs, Spectrum-X networking, ConnectX NICs, and Nvidia’s AI software.

HPE GTC news

At the GTC event, HPE also announced a range of additional enterprise and edge-focused products:

- HPE is expanding HPE Private Cloud AI, its turnkey enterprise AI factory co-engineered with Nvidia, to deliver greater performance, scalability, and flexibility for enterprise inference.

- New network expansion racks let HPE Private Cloud AI deployments scale up to 128 GPUs.

- The large HPE Private Cloud AI system is now available in an air-gapped configuration, ensuring sensitive data is not exposed to external networks.

- HPE ProLiant Compute DL380a Gen12 servers and HPE Private Cloud AI systems based on the DL380a are being certified for Fortanix Confidential AI, a joint solution that uses Nvidia Confidential Computing for secure on-premises deployment of AI models and processing of sensitive data without exposure.

- CrowdStrike delivers agentic security for HPE Private Cloud AI.

- HPE Private Cloud AI delivers a preconfigured hardware and software stack featuring the latest Nvidia AI Enterprise software and blueprints, including the updated AI-Q blueprint for AI agents and a new Omniverse blueprint for digital twins. The latest AI-Q blueprint enables developers to build customizable AI agents that they own, inspect, and control.

- HPE is updating HPE Private Cloud AI, the latest HPE ProLiant servers, and HPE AI factories to support the latest Nemotron open models, part of the Nvidia Agent Toolkit, to simplify deployment of secure, on-prem and sovereign infrastructure and quickly deliver scalable, production-ready results.

- RTX PRO 6000 Blackwell Server Edition GPUs are available across all configurations of HPE’s Private Cloud AI and AI factory solutions.

- HPE is adding the new RTX PRO 4500 Blackwell Server Edition GPU to ProLiant servers for edge deployments, small language models, vector databases, and data analytics workloads.

- HPE is developing new products built on RTX PRO 4500 Blackwell GPUs, including integration of the Retail Shopping Assistant Blueprint to streamline deployment across the retail sector.

- HPE is also expanding the portfolio of ProLiant Compute servers that feature the RTX PRO 6000 Blackwell Server Edition GPU.

- New Nvidia co-designed multi-workload offerings simplify deployment of AI use cases for autonomous edge intelligence, retail shopping assistance, video search and summarization, and biomedical research.

The multi-workload offerings combine ProLiant Compute servers with Nvidia accelerated computing, Spectrum-X Ethernet networking, BlueField DPUs, and ConnectX NICs. They also incorporate Nvidia’s software, CUDA-X libraries, blueprints, confidential computing, Multi-Instance GPU (MIG), and virtual GPU (vGPU) technologies, together with HPE chip-to-cloud security and AI-driven automation through HPE Compute Ops Management.

HPE AI Grid

HPE is also introducing an AI Grid, an end-to-end offering built on an Nvidia reference architecture to connect AI factories and distributed inference clusters across regional and far-edge sites. It enables service providers to deploy and operate thousands of distributed inference sites, turning AI installations into a single intelligent system.

The HPE AI Grid consists of:

- Juniper’s telco-grade multicloud routing and coherent optics for predictable long-haul and metro connectivity; cloud-native and multi-tenant security; firewalls; WAN automation; and orchestration to deliver zero-touch deployment and lifecycle operations.

- ProLiant Compute edge and rack servers with Nvidia accelerated computing, including RTX PRO 6000 Blackwell GPUs, as well as BlueField DPUs, Spectrum-X Ethernet switches, ConnectX SuperNICs, and AI blueprints for rapid AI inference.

HPE says AI Grid lets service providers convert existing sites with power and connectivity into RAN-ready AI grids, enabling distributed inference and new services at scale.

Availability

HPE support for RTX PRO 4500 Blackwell Server Edition GPUs across the ProLiant Compute server portfolio will roll out in Q1 and Q2 2026.

HPE Private Cloud AI with air-gapped deployment, support for RTX PRO 6000 Blackwell Server Edition GPUs across every configuration, and the AI-Q and Omniverse blueprints is available now.

The new network expansion racks that let HPE Private Cloud AI scale up to 128 GPUs will be available in July.

The HPE and Protopia secure blueprint for trustworthy AI factories is planned for Q2 2026.

Fortanix support for ProLiant DL380a Gen12 systems is planned for Q3 2026. ®

Bootnote

Nvidia’s cuVS is a library for vector search and clustering on the GPU. CAGRA is a graph-based nearest neighbor algorithm that was built from the ground up for GPU acceleration. CAGRA demonstrates state-of-the-art index build and query performance for both small- and large-batch search. ®
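For readers unfamiliar with graph-based nearest neighbor search, the following Python sketch shows the general idea behind methods in the CAGRA family. It builds a proximity graph in which each vector links to a fixed number of near neighbors, then answers a query by walking the graph greedily toward closer vectors. This is a generic, single-threaded illustration under stated assumptions, not Nvidia's CAGRA implementation or the cuVS API. The function names (`build_knn_graph`, `greedy_search`) and parameters (`degree`, `beam_width`) are hypothetical. The brute-force graph build also stands in for CAGRA's GPU-parallel construction and pruning.

```python
import heapq
import math
import random


def l2(a, b):
    # Euclidean distance between two equal-length vectors.
    return math.dist(a, b)


def build_knn_graph(points, degree):
    # Hypothetical helper: brute-force k-NN graph in which every node links to
    # its `degree` nearest neighbors. O(n^2), for illustration only; CAGRA
    # builds and prunes its fixed out-degree graph on the GPU.
    graph = []
    for i, p in enumerate(points):
        others = sorted((l2(p, q), j) for j, q in enumerate(points) if j != i)
        graph.append([j for _, j in others[:degree]])
    return graph


def greedy_search(points, graph, query, k, entry_points, beam_width):
    # Hypothetical helper: best-first graph traversal. It keeps a beam of the
    # closest nodes seen so far and expands the nearest unexpanded candidate
    # until no candidate can improve the beam.
    visited = set(entry_points)
    candidates = [(l2(points[e], query), e) for e in entry_points]
    heapq.heapify(candidates)               # min-heap of nodes still to expand
    best = sorted(candidates)[:beam_width]  # result beam, sorted by distance
    while candidates:
        dist, node = heapq.heappop(candidates)
        if len(best) >= beam_width and dist > best[-1][0]:
            break  # nearest unexpanded node is worse than the whole beam
        for nb in graph[node]:
            if nb in visited:
                continue
            visited.add(nb)
            d = l2(points[nb], query)
            if len(best) < beam_width or d < best[-1][0]:
                heapq.heappush(candidates, (d, nb))
                best.append((d, nb))
                best.sort()
                del best[beam_width:]  # keep only the closest beam_width nodes
    return [idx for _, idx in best[:k]]


if __name__ == "__main__":
    random.seed(0)
    points = [[random.random() for _ in range(8)] for _ in range(300)]
    graph = build_knn_graph(points, degree=16)
    query = [random.random() for _ in range(8)]
    approx = greedy_search(points, graph, query, k=5,
                           entry_points=[0, 1, 2, 3], beam_width=32)
    exact = sorted(range(len(points)), key=lambda i: l2(points[i], query))[:5]
    print(len(set(approx) & set(exact)), "of 5 exact neighbors found")
```

In this sketch, a wider `beam_width` visits more of the graph, so recall generally improves at the cost of more distance computations per query.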
