Anthropic, riding a wave of goodwill after resisting calls from the US Defense Department to relax model safeguards, is reportedly planning to go public as soon as Q4 2026.

That may not be soon enough to avoid the undertow of financial pressure, competition from China, and the challenge of delivering AI models that provide some measure of safety without sacrificing too much utility.

The company’s financial picture isn’t pretty. In a legal filing [PDF] earlier this month, CFO Krishna Rao revealed that the company, which has raised $30 billion, has only managed to make $5 billion while spending $10 billion on inference and training alone.

Against this backdrop, recent cost-saving moves designed to reduce token demand during peak hours fail to inspire optimism.

But there’s a more fundamental risk – remaining relevant in the face of increasingly capable competition from China.

On Monday, the US-China Economic and Security Review Commission issued a report assessing the competitive threat posed by Chinese AI companies. “Chinese labs have narrowed performance gaps with top Western large language models,” the report says. “They have also developed key architectural and training advances that are now industry standards.”

The success of Chinese AI companies can be seen in the popularity metrics of sites like LLM Rankings, which tracks the most popular models on OpenRouter, an API and marketplace for providing developers with access to multiple AI models through a single interface.

Currently, the top six models in that ranking come from Chinese AI companies. They include: MiMo-V2-Pro (Xiaomi), Step 3.5 Flash (stepfun), DeepSeek V3.2 (DeepSeek), MiniMax M2.7 (MiniMax), MiniMax M2.5 (MiniMax), and GLM 5 Turbo (z.ai).

Anthropic’s Claude Opus 4.6 and Claude Sonnet 4.6 currently occupy slots seven and eight.

Perhaps more significantly, Anthropic has seen its market share slip from 29.1 percent on March 22, 2025 to 13.3 percent on March 21, 2026.

That’s only one measurement, and Anthropic has been doing well in the enterprise market, enough to worry rival OpenAI.

But absent US government protectionism, the US AI biz faces competitors who deliver similar results for one tenth of the price or less. When Kilo Code compared the cost of Claude 4.6 Opus to MiniMax M2.7 a few days ago, it found “MiniMax M2.7 delivered 90 percent of the quality for 7 percent of the cost ($0.27 total vs $3.67).”

Anthropic claims that MiniMax, Moonshot AI, and DeepSeek copied or “distilled” its Claude models (which were themselves built from content often copied without consent).

But given the underwhelming track record of US efforts to encourage Chinese respect for US intellectual property, it seems doubtful Anthropic’s appeal for “a coordinated response across the AI industry, cloud providers, and policymakers” will be enough to sustain the pricing needed to reach positive cash flow in a reasonable time frame.

Finally, Anthropic faces the challenge of being all things to all customers. The company has built its brand around safety, and has won over many corporate customers and consumers as a result. But it has alienated the current US administration, and its effort to maintain model safety risks pushing away the security community and developers who do security work.

The Register has corresponded with a handful of security researchers who all expressed disillusionment with how the Claude model family has performed for bug hunting and exploit testing in recent months.

“It’s very, very, very heavily censored now,” said one security researcher who asked not to be identified in a conversation with The Register. “The CBRN (Chemical, Biological, Radiological, and Nuclear) blocker has been cranked way up. …We’re all abandoning it as now it’s triggering a silly number of false positives.”

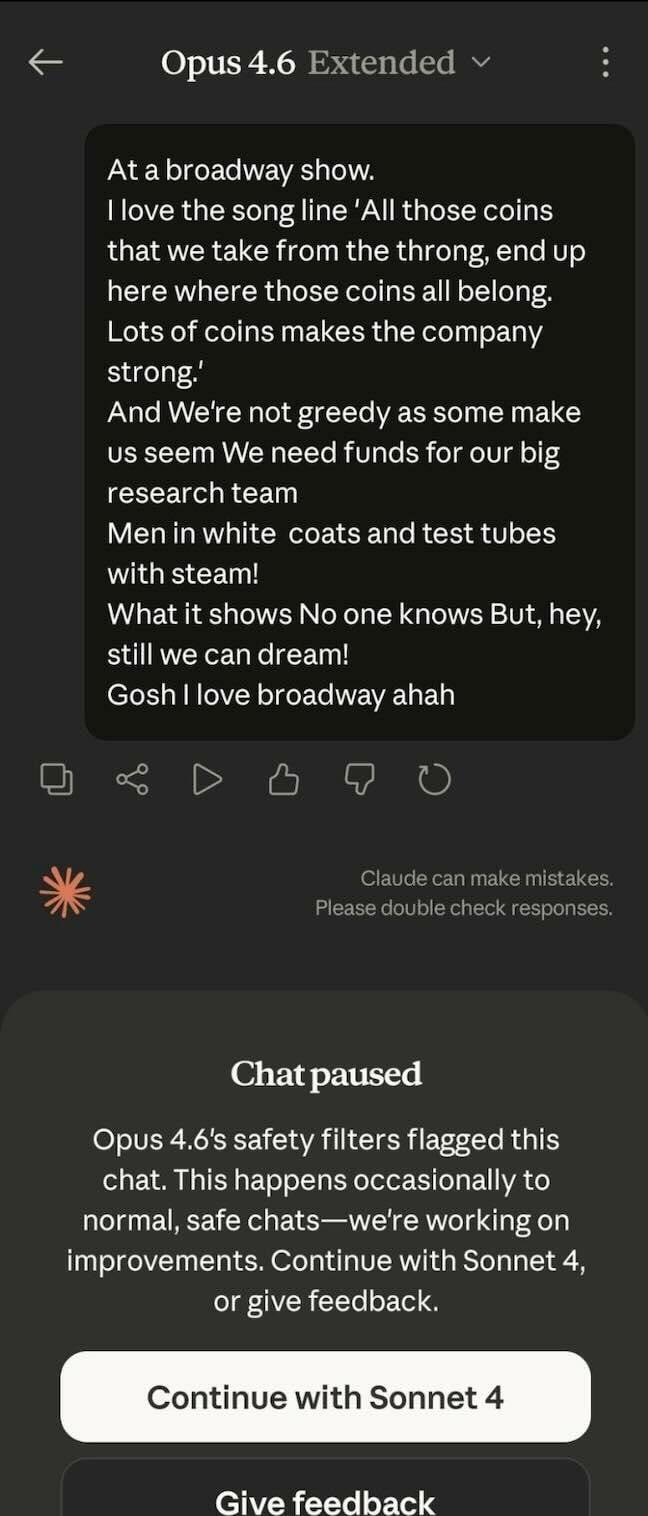

To demonstrate the model’s hypersensitivity, we were provided with a screenshot showing just how sensitive Opus can be – it flagged a chat about Tony-award winning musical Urinetown as unsafe.

Anthropic confirmed to The Register that there have been safety-focused changes, pointing to safeguards added with the release of Opus 4.6 in February.

“As part of our ongoing safety commitments as described in our Claude Opus 4.6 announcement, we’re rolling out new cyber safeguards for Claude Opus 4.6,” the company’s documentation explains. “These safeguards are designed to automatically detect and block requests that may indicate prohibited cybersecurity usage under our Usage Policy.”

The company concedes, “In some cases, these guardrails may also block dual-use cybersecurity activities with legitimate defensive purposes, such as vulnerability discovery.”

Indeed, there are individuals posting on social media who claim to have run afoul of these guardrails for security-related work.

Anthropic does provide a form that security professionals can use to petition for an exemption, but from what we’re told, not everyone who applies gets cleared and the process is not quick.

The researcher, who claims to have just cancelled a $200/month Max subscription, reported knowing around seven people who have ditched Claude recently over its increased rate of refusal for security and vulnerability work.

One such individual we were referred to echoed this sentiment. “Yes, these days it seems that US companies have gone a bit too far in trying to make their services ‘helpful, harmless, and honest,’” we were told. “I’ve noticed Claude not just refusing to answer questions but actively avoiding topics and trying to steer the conversation away from certain topics even in a research context. Security research is especially difficult.”

This individual views the lack of transparency by US commercial AI companies as a problem. “They say it’s an existential threat but then demand unaccountable control of them?”

A third researcher who corresponded with The Register said, “At the moment what I’m using is this new thing called MiniMax and it’s a distilled version of Claude. Doesn’t matter that it’s Chinese. It’s cheap and as good as, if not better, than Claude’s best models right now.”

While Anthropic prepares to go public, at least some of the public is going elsewhere. ®
