GTC DEEP DIVE At Nvidia’s GTC convention this week, CEO Jensen Huang lastly addressed a $20 billion query he’s dodged for months: Why spend a lot to license AI chip startup Groq’s tech and rent away its engineers relatively than construct it themselves?

As we have said before, if Nvidia needed to construct an SRAM-heavy inference accelerator, it did not want to purchase Groq to do it. The corporate’s newly introduced Groq 3 LPX racks, which pack 256 LP30 language processing models (LPUs) right into a single system, present time-to-market was the explanation Nvidia purchased relatively than constructed.

We’re instructed the chip relies on Groq’s second-gen LPU tech with a handful of last-minute tweaks made simply earlier than tapping out at Samsung’s fabs.

The chip does not use Nvidia’s proprietary NVLink interconnect, it lacks NVFP4 {hardware} help, and it is not CUDA-compatible at launch.

We are able to due to this fact conclude that the $20 billion paid to accumulate Groq’s mental property rights and engineering employees was a possibility value to get the chips out the door and into prospects’ fingers this yr.

Why the frenzy?

One of many defining traits of SRAM-heavy architectures from Groq and its rival Cerebras is that they’re very quick when operating LLM inferencing workloads, routinely attaining technology charges exceeding 500 and even 1000 tokens a second.

The sooner Nvidia can generate tokens, the sooner code assistants and AI brokers can act. However this type of velocity additionally opens the door to what Huang describes as test-time scaling.

The thought is that by letting “reasoning” fashions generate extra “considering” tokens, they’ll produce smarter, extra correct outcomes. So, the sooner you possibly can generate tokens, the much less of a latency penalty test-time scaling incurs.

On stage at GTC, Huang prompt that this high-performance and low-latency inference supplier may finally cost as a lot as $150 per million tokens for this functionality.

As you possibly can see, Nvidia’s GPUs are nice for producing bulk tokens, however as interactivity will increase, effectivity drops. – Click on to enlarge

Sadly for Nvidia, GPUs are nice for batch processing however do not scale almost as effectively as per-user-output speeds enhance. No less than not on their very own.

By combining its GPUs and Groq’s LPU tech, Nvidia goals to ship the perfect of each worlds: an inference platform that scales far more effectively at greater tokens per second per consumer.

On this graphic, the faint inexperienced and yellow strains present GPU and LPU scaling. By combining the 2, Nvidia goals to ship the perfect of each worlds — excessive throughput and interactivity. Picture Credit score: Nvidia – Click on to enlarge

Nvidia can also be underneath some strain to take care of its dominance of the AI infrastructure market as rival chip designers like AMD shut the hole on {hardware} and software program.

Final week, Amazon and Cerebras introduced a collaboration to pair AWS’ Trainium-3 accelerators with the latter’s wafer-scale accelerators for most of the identical causes Nvidia constructed LPX. In fact, AWS has additionally introduced plans to deploy greater than one million Nvidia GPUs along with fielding Nvidia-Groq LPUs, so the cloud large hasn’t all of the sudden began selecting sides.

The Groq-3 LPX

The LP30 could be very completely different from Nvidia’s GPUs. It is constructed by Samsung Electronics relatively than TSMC and makes use of solely on-chip SRAM. It additionally ditches the standard Von Neumann structure for one more generally known as information circulate.

Relatively than fetching directions from reminiscence, decoding, executing, after which writing that again to a register, information circulate architectures course of information because it’s streamed by the chip. The processor’s compute models do not have to attend for a bunch of load and retailer operations to shuffle information round, which, in principle ends in greater achievable utilization.

Based on Nvidia, every LP30 can ship 1.2 petaFLOPS of FP8 compute. However, as we talked about earlier, help for 4-bit block floating level information varieties, like MX or NV FP4, will not arrive till the LP35 arrives someday subsequent yr.

That compute is tied to a comparatively massive pool of SRAM reminiscence, which is orders of magnitude sooner than the high-bandwidth reminiscence (HBM) present in GPUs at the moment, however can also be extremely inefficient when it comes to area required.

Every LPU solely has sufficient die area for 500 MB of on-chip reminiscence. For comparability, simply one of many eight HBM4 modules on Nvidia’s Rubin GPUs accommodates 36GB of reminiscence. What the LP30 lacks in capability, it greater than makes up for in bandwidth, attaining speeds as much as 150 TB/s – almost 7 instances greater than Nvidia’s Rubin accelerators.

This makes LPUs excellent for the auto-regressive decode part of the inference pipeline, throughout which all of a mannequin’s lively parameters must be streamed from reminiscence for each token generated.

In fact, to do this, you’ll want to match the mannequin in reminiscence, which is not any straightforward job for the trillion-parameter fashions Nvidia is focusing on. For fashions this huge, a number of racks are required. Due to this, LP30 bristles with interconnects. Every chip options 96 of them – particularly, 112 Gbps SerDes – totalling 2.5 TB/s of bidirectional bandwidth.

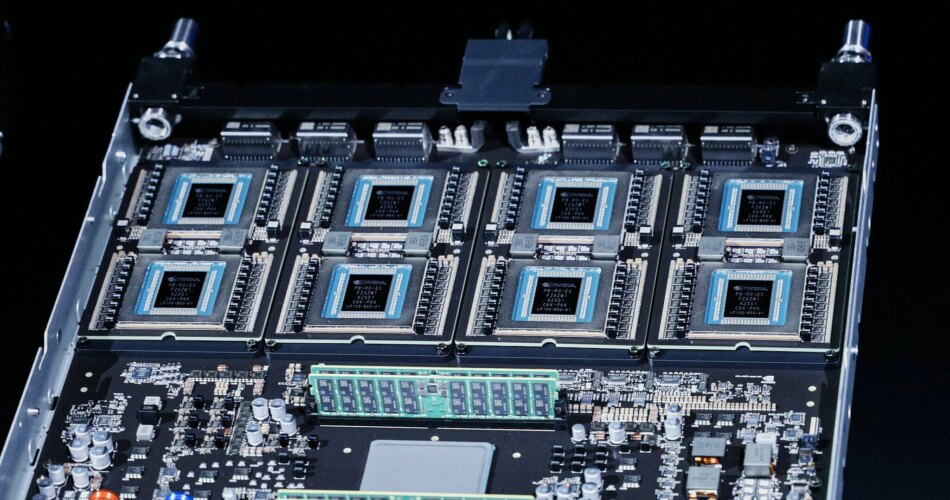

Every LPX rack is provided with 256 LPUs. These are unfold throughout 32 compute trays, every containing eight LPUs, some cloth enlargement logic and DRAM, and the host CPU and a BlueField-4 information processing unit (DPU).

Every LPU compute tray options eight liquid-cooled Groq-3 LPUs totalling 4GB of SRAM – Click on to enlarge

A few of that community connectivity is funnelled out the again of those blades into a brand new copper Ethernet backplane Nvidia calls the Oberon ETL256, whereas the rest is directed out the entrance of the system enabling a number of NVL72 and LPX racks to be stitched collectively.

Not a standalone half

Whereas it is fully potential to run massive language fashions (LLMs) fully on an LPX cluster, that is not how Nvidia is positioning the product.

This graphic reveals how inference workloads are distributed throughout GPUs and LPUs. Picture credit score: Nvidia – Click on to enlarge

As a substitute, a number of LPX racks is paired with a Vera-Rubin NVL72, which we discussed in more detail again when Nvidia confirmed it off in January, with numerous elements of the inference stack distributed throughout the GPUs and LPUs. Nvidia’s reference design has a comparatively small variety of GPUs dealing with the compute-heavy prompt-processing (prefill) part, whereas the bandwidth-intensive decode part, the place tokens are generated, is break up between a separate pool of GPUs and the LPUs.

Throughout this decode part, Nvidia takes benefit of GPUs’ comparatively massive reminiscence and compute capability to deal with the eye operations, whereas the bandwidth-constrained feed ahead neural community ops are offloaded to LPUs sitting within the LPX rack over Ethernet.

Nvidia’s Dynamo disaggregated inference platform handles orchestration for all of this.

What number of LPUs do you want?

The entire system requires plenty of LPUs.

The precise ratio of GPUs to LPUs depends upon the workload. Duties requiring extraordinarily massive contexts, batch sizes, or concurrency might have a bigger pool of GPUs. A general-purpose chatbot would possibly run nicely on a single rack.

It is because longer context home windows require extra reminiscence for the key-value (KV) caches that retailer mannequin state (suppose short-term reminiscence) and a spotlight operations. By retaining these on the GPU, Nvidia is ready to get by with fewer LPUs.

The precise variety of required LPUs is immediately proportional to the dimensions of a mannequin. For a trillion-parameter mannequin, that interprets to between 4 and eight LPX racks, or 1,024 to 2,048 LPUs, relying on whether or not the weights are saved in SRAM at 4-bit or 8-bit precision.

Who’s LPX for?

When you’re not a hyperscaler, neocloud, mannequin dev, LPX might be not for you. The sheer variety of LPUs required to serve massive open fashions will doubtless put Nvidia’s LPX platform out of attain for many enterprises.

Chatting with press forward of this week’s keynote, Buck stated Nvidia is focusing totally on mannequin builders and repair suppliers that have to serve trillion-plus-parameter fashions with token charges exceeding 500 to 1,000 a second.

Having stated that, in a technical blog, Nvidia introduced one other use case for the LPUs as a speculative decode accelerator, one thing we prompt the corporate would possibly do again in December.

Speculative decoding is a method for juicing inference efficiency by utilizing a smaller, extra performant “draft” mannequin to foretell the outputs of a bigger mannequin. When it really works, the method can velocity token technology by wherever from 2x to 3x.

And for the reason that method fails again to the bigger mannequin anytime it guesses incorrect, there isn’t any loss in high quality or accuracy.

Nvidia proposes internet hosting the draft mannequin on LPUs and the bigger goal mannequin on a set of GPUs. Since draft fashions are usually pretty small, this would possibly current a possibility for Nvidia to promote LPUs to enterprise prospects.

What occurred to Rubin CPX?

You could be scratching your head, questioning “wasn’t there imagined to be some type of particular Rubin chip optimized for large-context prefill processing?” You are not hallucinating.

Again at Computex final northern spring, Nvidia unveiled the Rubin CPX, a model of Rubin that used slower, inexpensive GDDR7 reminiscence to hurry up the time to first token – how lengthy customers or brokers have to attend for the mannequin to start out producing an output – when working with massive inputs.

The thought was that Rubin CPX may minimize down on wait instances for functions that may contain processing massive portions of paperwork, liberating up the non-CPX Rubins, and rushing up total decode instances.

Nvidia’s Vera Rubin NVL144 CPX compute trays will now pack 16 GPUs. Eight with HBM and one other eight context optimized ones utilizing GDDR7 – Click on to enlarge

Nevertheless, by early 2026, Nvidia stopped mentioning CPX. This week, we realized the undertaking had been placed on the again burner so Nvidia can prioritize LPX.

It is vital to notice that LPX shouldn’t be a alternative for CPX. The 2 platforms have been designed to speed up reverse ends of the inference pipeline: LPUs are designed to hurry up token technology throughout the decode part, whereas CPX was supposed to chop the time customers or brokers spent ready for the mannequin to reply throughout prefill.

Nvidia hasn’t given up on the idea both. Ian Buck, VP of Hyperscale and HPC at Nvidia, instructed press that CPX continues to be a good suggestion and that we might even see the idea resurface in future generations.

An alphabet soup of rack scale architectures

Whereas LPX is probably the most fascinating addition to Nvidia’s rack-scale lineup, it isn’t the one one.

At GTC, Nvidia additionally unveiled three extra rack-scale designs, one every for networking, storage, and agentic compute.

We checked out Nvidia’s new Vera CPU racks in more detail earlier this week, however the system makes use of the identical ETL community backplane as its LPX racks and HGX programs, and options 32 compute blades, every with eight 88-core Vera CPUs and as much as 12 TB of LPDDR5X SOCAMM reminiscence modules on board.

Along with serving because the host processor on Nvidia’s newest technology merchandise, the Vera CPU rack is meant as an execution atmosphere for agentic programs, like Open Claw, that require high-memory bandwidth and single-threaded efficiency.

Alongside the CPU racks is a brand new storage rack known as the BlueField-4 STX. Because the identify suggests, the reference design combines Nvidia’s BlueField-4 information processing models (DPUs, aka SmartNICs) with a Vera CPU and ConnectX-9 NICs. Nvidia intends this providing to function a KV-cache offload goal.

Any time an LLM processes a immediate, it generates KV caches that retailer the mannequin state in vectors. By retaining these pre-computed vectors in GPU or system reminiscence, or flash storage, solely new tokens should be computed whereas repeating ones could be recycled from cache.

Earlier this yr, Nvidia showed off its context-memory storage platform, which is supposed to automate the offload of KV caches to suitable storage targets. The AI infrastructure large claims that this method can enhance token charges by as much as 5x by liberating up GPU sources to deal with different parts of the inference pipeline.

Lastly, there’s the Spectrum-6 SPX community rack, which additionally leverages the MGX ETL reference design to simplify cabling of Spectrum-X and Quantum-X switches.

Collectively, these rack programs type a type of meeting line. Consider it this manner: Vera CPU racks operating AI brokers make API calls to fashions operating Vera-Rubin NVL72 programs with Groq LPX decode accelerators. KV caches generated by these brokers are offloaded to STX storage, and all the pieces is related to 1 one other by SPX racks filled with Spectrum or Quantum switches. And so long as the AI increase continues, Nvidia retains printing cash. ®

Source link