Meta this week announced a sweeping set of anti-scam measures across Facebook, Messenger, and WhatsApp, combining new artificial intelligence detection tools, expanded advertiser verification requirements, and a series of law enforcement partnerships that resulted in arrests and the disabling of hundreds of thousands of accounts. The announcement, published March 11, 2026, on Meta’s official newsroom, arrives at a charged moment for the company’s relationship with the fraud problem on its platforms.

The scale of the problem is significant. According to the announcement, Meta removed over 159 million scam ads in 2025 for violating its policies – up from the 134 million scam ads the company reported removing as of its December 3, 2025 Global Anti-Scam Summit disclosure. Of these 159 million, according to Meta, 92% were taken down before anyone reported them to the company. That figure reflects a significant improvement in proactive detection. At the same time, Meta says it took down 10.9 million accounts on Facebook and Instagram associated with criminal scam centers.
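To put the removal figures in absolute terms, the short Python sketch below works through the arithmetic. It uses only the numbers quoted above; the variable and function names are illustrative, and treating the remaining 8% as ads removed after at least one user report is an inference from Meta's wording, not a figure the company published.

```python
# Figures quoted in the article; integer arithmetic avoids float rounding.
TOTAL_REMOVED_2025 = 159_000_000   # scam ads removed in 2025
PROACTIVE_PERCENT = 92             # share taken down before any user report
REPORTED_AT_SUMMIT = 134_000_000   # figure disclosed on December 3, 2025


def breakdown(total: int, proactive_percent: int) -> tuple[int, int]:
    """Split a removal total into proactive and non-proactive counts."""
    proactive = total * proactive_percent // 100
    return proactive, total - proactive


proactive, after_report = breakdown(TOTAL_REMOVED_2025, PROACTIVE_PERCENT)
# Difference between the full-year figure and the December 3 disclosure.
added_since_summit = TOTAL_REMOVED_2025 - REPORTED_AT_SUMMIT

print(f"Removed before any report:   {proactive:,}")
print(f"Remaining removals (assumed report-driven): {after_report:,}")
print(f"Added since the summit figure: {added_since_summit:,}")
```

On these numbers, roughly 146 million ads were removed proactively, which leaves nearly 13 million that were still live when someone flagged them.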

The announcement follows months of sustained scrutiny. Internal company documents reviewed by Reuters in November 2025 indicated that Meta’s platforms had been exposing users to an estimated 15 billion higher-risk scam advertisements daily, and that Meta internally projected earning roughly 10% of its 2024 annual revenue – roughly $16 billion – from ads promoting scams and banned goods. The company has disputed parts of that characterization.

New AI detection capabilities

The technical heart of today’s announcement centers on AI systems designed to catch deception that conventional rule-based detection misses. According to Meta, the company’s engineers built systems capable of analyzing multiple signals simultaneously – text, images, and surrounding context – to identify “a broader range of more sophisticated scam patterns faster and at scale.”

Two specific AI-powered capabilities stand out. The first targets celebrity and brand impersonation, a category that has become one of the most lucrative vectors for fraud on social platforms. According to Meta, AI now helps analyze fake fan sentiment, misleading biographical information, and apparent associations with public figures or brands. The system can, according to the company, process “far more contextual information about public figures, improving our ability to catch deceptive impersonations.” This builds on the facial recognition approach Meta described at the Global Anti-Scam Summit in December 2025, which more than doubled the volume of fraudulent ads detected during testing phases.

The second capability addresses deceptive links and domain impersonation. Meta states it uses advanced AI to proactively detect content that redirects users to webpages designed to mimic legitimate ones – a tactic common in phishing and investment fraud. The technology, according to the announcement, identifies “a broader range of deceptive behaviors with higher precision to protect thousands of brands against impersonation.”

User-facing warnings: Facebook, WhatsApp, and Messenger

Beyond back-end detection, Meta is introducing or expanding three user-facing tools. The first is a Facebook feature that generates alerts when users send or receive a friend request from an account showing certain signs of suspicious activity – for instance, when there are few mutual friends or when the account lists a different country in its profile. The feature is described as currently in testing.

The second targets a specific WhatsApp attack vector. According to the announcement, scammers have been tricking users into linking their WhatsApp accounts to attacker-controlled devices, sometimes by posing as talent competitions asking people to enter a phone number and a linking code, or by presenting fraudulent QR codes. WhatsApp will now generate alerts when behavioral signals suggest a device-linking request may be suspicious. The warning shows where the request originates and flags it as a potential scam before the link is made.

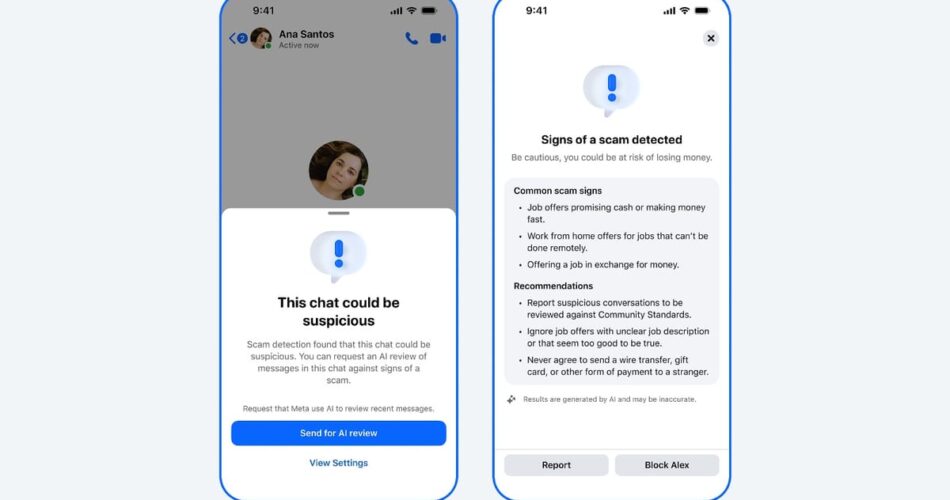

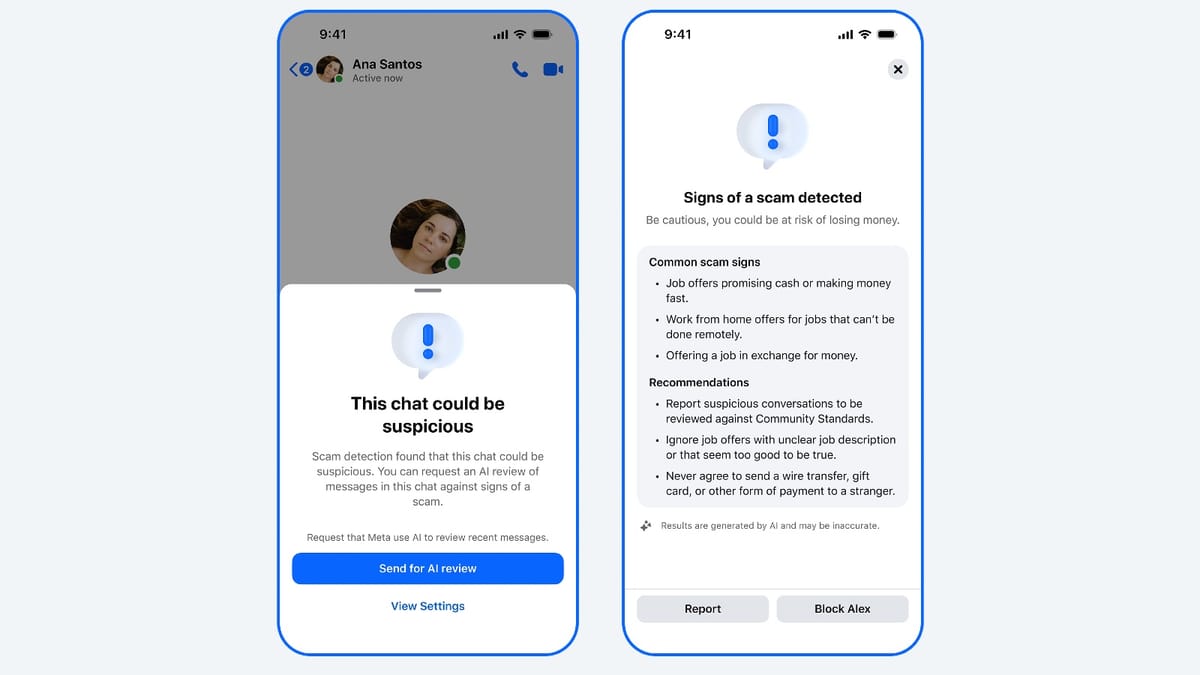

The third is an expansion of advanced scam detection on Messenger. Meta states it is rolling out this system to more countries during March 2026. When a new contact’s chat contains patterns consistent with common scams – such as suspicious job offers – users are warned and invited to share recent chat messages for an AI scam review. If a potential scam is detected, the platform provides information on common scam types and suggests actions including blocking or reporting the account.
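Meta has not published the logic behind any of these warnings. As a purely illustrative sketch, the two example signals the announcement gives for the Facebook friend-request alert (few mutual friends, a different country on the profile) could be expressed as a simple rule check in Python. The `FriendRequest` type, the field names, and the `FEW_MUTUAL_FRIENDS` cutoff are all hypothetical; a production system would presumably weigh many more signals.

```python
from dataclasses import dataclass

# Hypothetical cutoff: the announcement says "few mutual friends"
# without giving a number.
FEW_MUTUAL_FRIENDS = 2


@dataclass
class FriendRequest:
    mutual_friends: int
    requester_country: str   # country listed on the requesting profile
    recipient_country: str   # country listed on the recipient's profile


def warning_reasons(req: FriendRequest) -> list[str]:
    """Return the example signals from the announcement that this request trips."""
    reasons = []
    if req.mutual_friends <= FEW_MUTUAL_FRIENDS:
        reasons.append("few mutual friends")
    if req.requester_country != req.recipient_country:
        reasons.append("profile lists a different country")
    return reasons


# A request with no shared contacts from a profile in another country
# trips both example signals; a local request with many mutuals trips none.
print(warning_reasons(FriendRequest(0, "TH", "US")))
print(warning_reasons(FriendRequest(25, "US", "US")))
```

Returning the list of reasons, not a bare yes/no, mirrors the way the described warnings tell the user why a request looks suspicious.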

Advertiser verification: from 70% to 90%

Perhaps the most consequential change for the advertising community specifically is the expansion of advertiser verification. According to today’s announcement, Meta is working to ensure that verified advertisers drive 90% of its ad revenue by the end of 2026, up from the current 70%. The remaining 10% would come from what Meta describes as low-risk businesses.

The expansion covers the highest-risk advertising categories. According to the announcement, “The verification process helps promote greater transparency, limiting attempts to misrepresent advertiser identity.” Meta frames this as one layer in a multi-layered approach to platform safety, not a standalone solution.

For context, Meta has been expanding verification requirements selectively across markets for some time. The company announced verification requirements for advertisers targeting Thailand in October 2025, requiring affected advertisers to verify both the beneficiary and payer of their campaigns, with results displayed in the Ad Library. That policy gave advertisers a seven-day window to comply or lose the ability to deliver ads to Thai users.

The 90% target represents a meaningful shift. Today, roughly 30% of Meta’s ad revenue flows through unverified advertisers – a pool large enough to encompass significant fraud exposure. The company has not disclosed the specific verification methodology it will apply to reach the new threshold, nor has it specified which advertising categories beyond current high-risk areas will be brought into scope.
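The size of that shift is easier to see from the unverified side. The sketch below uses only the 70% and 90% figures from the announcement; the variable names are illustrative, and the calculation assumes the shares are measured against the same revenue base.

```python
from fractions import Fraction

VERIFIED_NOW = 70      # percent of ad revenue from verified advertisers today
VERIFIED_TARGET = 90   # percent targeted by the end of 2026

unverified_now = 100 - VERIFIED_NOW          # 30 percent
unverified_target = 100 - VERIFIED_TARGET    # 10 percent

# Percentage-point increase in the verified share.
shift_points = VERIFIED_TARGET - VERIFIED_NOW

# Relative shrinkage of the unverified share of revenue.
relative_reduction = Fraction(unverified_now - unverified_target, unverified_now)

print(f"Verified share rises by {shift_points} percentage points")
print(f"Unverified share falls from {unverified_now}% to {unverified_target}%, "
      f"a reduction of {relative_reduction}")
```

Put differently, a 20-point rise in the verified share implies cutting the unverified share of revenue by two-thirds.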

Law enforcement actions: 150,000 accounts, 21 arrests

Today’s announcement also details enforcement activity conducted alongside global law enforcement agencies. According to Meta, a Joint Disruption Week involving the FBI, the Department of Justice Scam Center Strike Force, the Royal Thai Police Anti-Cyber Scam Center, and other global law enforcement agencies resulted in Meta investigators disabling over 150,000 accounts associated with scam center networks. The same operation contributed to 21 arrests made by the Royal Thai Police.

This represents the second Joint Disruption Week with the Royal Thai Police since December 2025. According to Meta, the first such operation, in December, led to the removal of over 59,000 Facebook pages and accounts linked to money laundering operations and illegal recruitment schemes.

A separate enforcement action used Meta’s Fraud Intelligence Reciprocal Exchange program, known as FIRE. Through that mechanism, Meta removed, disabled, and unpublished more than 15,000 assets on Facebook and Instagram. These assets, according to the announcement, used deceptive personas claiming to be Japanese women seeking relationships with millennials and older men, with some accounts also promoting gambling-related content.

A third operation involved collaboration between Meta, the Nigeria Police Force, and the UK National Crime Agency. According to the announcement, the joint effort led to the arrest of seven suspects allegedly involved in a scam center in Agbor, Delta state, Nigeria. The syndicate targeted British and American citizens based in the UK, using fake social media accounts impersonating cryptocurrency traders and Facebook groups.

These enforcement efforts follow directly from Meta’s February 26, 2026 lawsuits against deceptive advertisers in Brazil, China, and Vietnam, which targeted celeb-bait and cloaking operators and included cease-and-desist letters to eight former Meta Business Partners accused of promoting enforcement evasion services.

Awareness campaigns

The announcement covers four public awareness campaigns running in parallel with the technical and enforcement measures.

The #TrappedinScamCrime campaign, developed in partnership with the United Nations Office on Drugs and Crime, the International Justice Mission, and the US Department of State, has launched across Vietnam, Thailand, Laos, Cambodia, Myanmar, Indonesia, Malaysia, and the Philippines. The campaign addresses online recruitment and human trafficking for forced criminality – a category tied to the scam compound operations Meta has been disrupting.

The Scam Se Bacho campaign, a year-long initiative running in India with the Indian Cyber Crime Coordination Centre and the Securities and Exchange Board of India, features Indian actor Neena Gupta. Educational campaigns in Brazil and Mexico, developed in partnership with the Brazilian Federation of Banks and Mexico’s consumer protection agency Profeco, focused on scam awareness during Cybersecurity Awareness Month and the holiday season.

Context for marketing professionals

The advertising industry is watching Meta’s anti-scam measures closely, partly because of what they imply for legitimate advertisers and partly because of the context in which they are being announced. IAB Sweden expelled Meta from its membership on March 12, 2026, ruling that the platform’s work against deceptive advertising was insufficient – a rare trade body sanction that followed the November 2025 Reuters disclosures about Meta’s internal scam ad revenue projections.

For advertisers, the verification expansion carries practical implications. The advertiser support infrastructure at Meta has drawn criticism from practitioners who find themselves unable to resolve account issues through automated systems. Expanding the scope of verification requirements raises questions about what happens when legitimate advertisers fail to complete verification correctly, and whether adequate support will exist to resolve the resulting disputes.

The expert reaction to the Reuters documents in November 2025 highlighted the tension between platform scale and enforcement precision. At roughly 15 billion daily ad impressions across Meta’s properties, distinguishing fraudulent from legitimate advertising at 95% certainty thresholds means a meaningful fraction of fraud passes through. The new tools and the raised verification target represent Meta’s stated answer to that gap. Whether the operational execution matches the announced ambition remains to be seen.

Meta’s 90% verification target also provides a measurable benchmark the industry can track. The company has set an explicit end-of-2026 deadline. That specificity, unlike many platform anti-fraud commitments, creates a defined accountability point.

Timeline

- February 12, 2025 – Meta announces comprehensive romance scam prevention measures, reporting 408,000 accounts removed in 2024

- August 20, 2025 – Former Meta product manager files whistleblower complaint alleging artificial ROAS inflation for Shops ads

- October 27, 2025 – Meta announces expanded advertiser verification requirements for Thailand campaigns

- November 6, 2025 – Reuters publishes internal Meta documents; PPC Land reports Meta earned billions from scam ads through a penalty bidding system

- November 30, 2025 – Industry expert commentary on the Reuters scam ad findings published

- December 3, 2025 – Meta reports removal of 134 million scam ads at the Global Anti-Scam Summit in Washington, DC

- December 2025 – First Joint Disruption Week with the Royal Thai Police; 59,000 Facebook pages and accounts removed

- January 15, 2026 – Meta advertiser support failures documented, raising concerns about verification dispute resolution

- January 22, 2026 – Instagram product scam case study illustrates continued gaps in Meta’s ad verification

- February 26, 2026 – Meta files lawsuits against scam advertisers in Brazil, China, and Vietnam for celeb-bait and cloaking

- March 11, 2026 – Meta announces new AI scam detection tools, WhatsApp device-linking warnings, expanded advertiser verification to 90%, and second Joint Disruption Week resulting in 150,000 accounts disabled and 21 arrests by the Royal Thai Police

- March 12, 2026 – IAB Sweden expels Meta from membership over insufficient action against deceptive ads

Summary

Who: Meta Platforms, operating Facebook, Messenger, and WhatsApp, working alongside the FBI, the US Department of Justice Scam Center Strike Force, the Royal Thai Police Anti-Cyber Scam Center, the Nigeria Police Force, the UK National Crime Agency, and awareness campaign partners including the UNODC, IJM, the US Department of State, India’s I4C and SEBI, Febraban in Brazil, and Profeco in Mexico.

What: Meta introduced new AI-powered scam detection tools targeting celebrity impersonation and domain spoofing, launched user-facing warnings on Facebook for suspicious friend requests and on WhatsApp for suspicious device-linking attempts, expanded Messenger scam detection to more countries, and announced a plan to raise the share of ad revenue from verified advertisers from 70% to 90% by the end of 2026. Concurrent law enforcement operations disabled over 150,000 accounts associated with scam center networks and contributed to 21 arrests.

When: The announcement was published March 11, 2026. The second Joint Disruption Week with the Royal Thai Police took place in early 2026, building on a first operation in December 2025. The 90% advertiser verification target is set for the end of 2026.

Where: The tools and features apply across Meta’s global platforms – Facebook, Messenger, and WhatsApp. Law enforcement operations focused on scam center networks, with arrests made in Thailand and disruption of a scam center in Agbor, Delta state, Nigeria. Awareness campaigns are running across eight countries in Southeast Asia and in India, Brazil, and Mexico.

Why: Meta states that criminal scam networks are growing in sophistication, industrializing fraud at scale across platforms and borders. The company faces intensifying regulatory scrutiny and trade body pressure following internal document disclosures in late 2025 that revealed the scale of fraudulent advertising on its platforms. The new measures represent Meta’s stated effort to demonstrate active enforcement, expand transparency through advertiser verification, and work with law enforcement to address fraud that transcends any single platform or jurisdiction.
