The concept of compressibility as a quality signal is not widely known, but SEOs should be aware of it. Search engines can use web page compressibility to identify duplicate pages, doorway pages with similar content, and pages with repetitive keywords, making it useful knowledge for SEO.

Although the following research paper demonstrates a successful use of on-page features for detecting spam, the deliberate lack of transparency by search engines makes it difficult to say with certainty whether search engines are applying this or similar techniques.

What Is Compressibility?

In computing, compressibility refers to how much a file (data) can be reduced in size while retaining essential information, typically to maximize storage space or to allow more data to be transmitted over the Internet.

TL/DR Of Compression

Compression replaces repeated words and phrases with shorter references, reducing the file size by significant margins. Search engines typically compress indexed web pages to maximize storage space, reduce bandwidth, and improve retrieval speed, among other reasons.

This is a simplified explanation of how compression works:

- Identify Patterns: A compression algorithm scans the text to find repeated words, patterns and phrases.

- Shorter Codes Take Up Less Space: The codes and symbols use less storage space than the original words and phrases, which results in a smaller file size.

- Shorter References Use Fewer Bits: The “code” that essentially symbolizes the replaced words and phrases uses less data than the originals.
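The steps above can be sketched with a toy substitution scheme in Python. This is only an illustration of the idea, not how GZIP works internally (GZIP uses the DEFLATE algorithm, which combines back-references with Huffman coding). The function names `encode` and `decode`, the `~` token, and the sample text are all assumptions made for the example.

```python
def encode(text: str, phrase: str, token: str = "~") -> tuple[str, dict[str, str]]:
    """Replace every occurrence of `phrase` with the shorter `token`."""
    if token in text:
        # The token must be unused, otherwise decoding would be ambiguous.
        raise ValueError("token already appears in the text")
    return text.replace(phrase, token), {token: phrase}


def decode(encoded: str, table: dict[str, str]) -> str:
    """Expand each token back into the phrase it stands for."""
    for token, phrase in table.items():
        encoded = encoded.replace(token, phrase)
    return encoded


# Hypothetical doorway-style text with one heavily repeated phrase.
page = "cheap hotels in Austin, cheap hotels in Dallas, cheap hotels in Houston"
encoded, table = encode(page, "cheap hotels in ")

print(encoded)                        # ~Austin, ~Dallas, ~Houston
print(len(encoded) < len(page))       # True: the encoded text is shorter
print(decode(encoded, table) == page) # True: nothing was lost
```

The more often a phrase repeats, the more the text shrinks, which is why repetitive pages compress so well.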

A bonus effect of using compression is that it can also be used to identify duplicate pages, doorway pages with similar content, and pages with repetitive keywords.

Research Paper About Detecting Spam

This research paper is significant because it was authored by distinguished computer scientists known for breakthroughs in AI, distributed computing, information retrieval, and other fields.

Marc Najork

One of the co-authors of the research paper is Marc Najork, a prominent research scientist who currently holds the title of Distinguished Research Scientist at Google DeepMind. He’s a co-author of the papers for TW-BERT, has contributed research for increasing the accuracy of using implicit user feedback like clicks, and worked on creating improved AI-based information retrieval (DSI++: Updating Transformer Memory with New Documents), among many other major breakthroughs in information retrieval.

Dennis Fetterly

Another of the co-authors is Dennis Fetterly, currently a software engineer at Google. He is listed as a co-inventor in a patent for a ranking algorithm that uses links, and is known for his research in distributed computing and information retrieval.

Those are just two of the distinguished researchers listed as co-authors of the 2006 Microsoft research paper about identifying spam through on-page content features. Among the several on-page content features the research paper analyzes is compressibility, which they discovered can be used as a classifier for indicating that a web page is spammy.

Detecting Spam Web Pages Through Content Analysis

Although the research paper was authored in 2006, its findings remain relevant today.

Then, as now, people attempted to rank hundreds or thousands of location-based web pages that were essentially duplicate content aside from city, region, or state names. Then, as now, SEOs often created web pages for search engines by excessively repeating keywords within titles, meta descriptions, headings, internal anchor text, and within the content to improve rankings.

Section 4.6 of the research paper explains:

“Some search engines give higher weight to pages containing the query keywords several times. For example, for a given query term, a page that contains it ten times may be higher ranked than a page that contains it only once. To take advantage of such engines, some spam pages replicate their content several times in an attempt to rank higher.”

The research paper explains that search engines compress web pages and use the compressed version to reference the original web page. They note that an excessive amount of redundant words results in a higher level of compressibility. So they set about testing whether there is a correlation between a high level of compressibility and spam.

They write:

“Our approach in this section to locating redundant content within a page is to compress the page; to save space and disk time, search engines often compress web pages after indexing them, but before adding them to a page cache.

…We measure the redundancy of web pages by the compression ratio, the size of the uncompressed page divided by the size of the compressed page. We used GZIP …to compress pages, a fast and effective compression algorithm.”
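The ratio the researchers describe can be approximated with Python’s standard `gzip` module. The sketch below is an illustration rather than the paper’s code: the function name `compression_ratio` and the two sample strings are assumptions, and the quote does not say how pages were prepared before compression, so the numbers it produces are not directly comparable to the paper’s.

```python
import gzip


def compression_ratio(page: str) -> float:
    """Size of the uncompressed page divided by the size of the compressed page."""
    raw = page.encode("utf-8")
    if not raw:
        raise ValueError("cannot compute a ratio for an empty page")
    return len(raw) / len(gzip.compress(raw))


# Hypothetical samples: one keyword-stuffed, one ordinary prose.
repetitive = "best pizza in town " * 200
ordinary = (
    "Our family bakery opened in 1998 and still bakes sourdough every morning. "
    "Visit us on Main Street for fresh bread, seasonal pastries, and coffee "
    "roasted by a local supplier."
)

print(f"repetitive: {compression_ratio(repetitive):.1f}")
print(f"ordinary:   {compression_ratio(ordinary):.1f}")
```

The keyword-stuffed sample produces a far higher ratio than the ordinary one, because almost all of it is a repeat of something GZIP has already seen.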

Excessive Compressibility Correlates To Spam

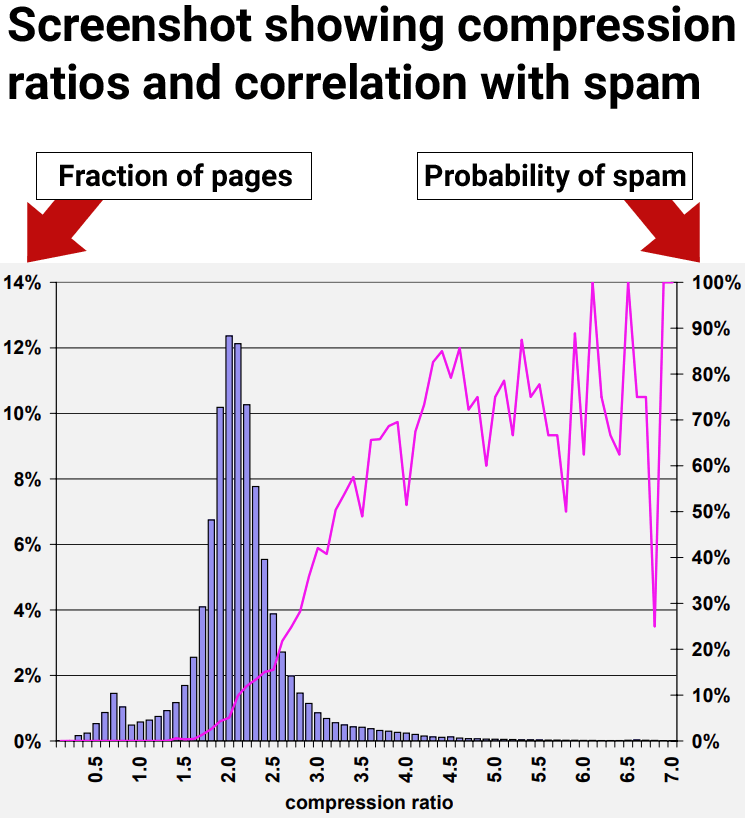

The outcomes of the analysis confirmed that net pages with at the least a compression ratio of 4.0 tended to be low high quality net pages, spam. Nonetheless, the very best charges of compressibility turned much less constant as a result of there have been fewer information factors, making it more durable to interpret.

Determine 9: Prevalence of spam relative to compressibility of web page.

The researchers concluded:

“70% of all sampled pages with a compression ratio of at least 4.0 were judged to be spam.”

But they also discovered that using the compression ratio by itself still resulted in false positives, where non-spam pages were incorrectly identified as spam:

“The compression ratio heuristic described in Section 4.6 fared best, correctly identifying 660 (27.9%) of the spam pages in our collection, while misidentifying 2,068 (12.0%) of all judged pages.

Using all of the aforementioned features, the classification accuracy after the ten-fold cross validation process is encouraging:

95.4% of our judged pages were classified correctly, while 4.6% were classified incorrectly.

More specifically, for the spam class 1,940 out of the 2,364 pages, were classified correctly. For the non-spam class, 14,440 out of the 14,804 pages were classified correctly. Consequently, 788 pages were classified incorrectly.”

The next section describes an interesting discovery about how to increase the accuracy of using on-page signals for identifying spam.
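The figures in that quote are consistent with each other, which a few lines of Python arithmetic can confirm. Only the four counts come from the quote; the variable names and the derived false-positive rate are added here for illustration.

```python
# Counts taken from the quoted passage.
spam_total, spam_correct = 2_364, 1_940
non_spam_total, non_spam_correct = 14_804, 14_440

missed_spam = spam_total - spam_correct              # spam labeled as non-spam
false_positives = non_spam_total - non_spam_correct  # non-spam labeled as spam
misclassified = missed_spam + false_positives

total = spam_total + non_spam_total
accuracy = (spam_correct + non_spam_correct) / total
false_positive_rate = false_positives / non_spam_total

print(misclassified)                  # 788
print(f"{accuracy:.1%}")              # 95.4%
print(f"{false_positive_rate:.1%}")   # 2.5%
```

Of the 788 errors, 424 are missed spam pages and 364 are legitimate pages wrongly flagged, so roughly 2.5% of the non-spam pages were false positives when all features were used together.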

Insight Into Quality Rankings

The research paper examined multiple on-page signals, including compressibility. They discovered that each individual signal (classifier) was able to find some spam, but that relying on any one signal on its own resulted in non-spam pages being flagged as spam, which are commonly referred to as false positives.

The researchers made an important discovery that everyone interested in SEO should know, which is that using multiple classifiers increased the accuracy of detecting spam and decreased the likelihood of false positives. Just as important, the compressibility signal only identifies one kind of spam, not the full range of spam.

The takeaway is that compressibility is a good way to identify one kind of spam, but there are other kinds of spam that this one signal does not catch.

This is the part that every SEO and publisher should be aware of:

“In the previous section, we presented a number of heuristics for assaying spam web pages. That is, we measured several characteristics of web pages, and found ranges of those characteristics which correlated with a page being spam. Nevertheless, when used individually, no technique uncovers most of the spam in our data set without flagging many non-spam pages as spam.

For example, considering the compression ratio heuristic described in Section 4.6, one of our most promising methods, the average probability of spam for ratios of 4.2 and higher is 72%. But only about 1.5% of all pages fall in this range. This number is far below the 13.8% of spam pages that we identified in our data set.”

So, although compressibility was one of the better signals for identifying spam, it was still unable to uncover the full range of spam within the dataset the researchers used to test the signals.
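A back-of-the-envelope calculation shows how small that slice is. The three inputs below are the figures from the quote; the function name `spam_coverage` is an assumption, and because the quoted 1.5% is itself approximate, the result is only a rough estimate.

```python
def spam_coverage(flagged_share: float, spam_probability: float, spam_share: float) -> float:
    """Estimate what fraction of all spam falls inside the flagged range."""
    spam_caught = flagged_share * spam_probability  # share of ALL pages that are flagged spam
    return spam_caught / spam_share


coverage = spam_coverage(
    flagged_share=0.015,    # about 1.5% of pages have a ratio of 4.2 or higher
    spam_probability=0.72,  # average probability of spam in that range
    spam_share=0.138,       # 13.8% of the data set was judged to be spam
)
print(f"{coverage:.1%}")    # 7.8%
```

Under these figures, a 4.2 threshold on its own would surface only about 8% of the spam in the data set.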

Combining Multiple Signals

The above results indicated that individual signals of low quality are less accurate. So they tested using multiple signals. What they discovered was that combining multiple on-page signals for detecting spam resulted in a better accuracy rate with fewer pages misclassified as spam.

The researchers explained that they tested the use of multiple signals:

“One way of combining our heuristic methods is to view the spam detection problem as a classification problem. In this case, we want to create a classification model (or classifier) which, given a web page, will use the page’s features jointly in order to (correctly, we hope) classify it in one of two classes: spam and non-spam.”

These are their conclusions about using multiple signals:

“We have studied various aspects of content-based spam on the web using a real-world data set from the MSNSearch crawler. We have presented a number of heuristic methods for detecting content based spam. Some of our spam detection methods are more effective than others, however when used in isolation our methods may not identify all of the spam pages. For this reason, we combined our spam-detection methods to create a highly accurate C4.5 classifier. Our classifier can correctly identify 86.2% of all spam pages, while flagging very few legitimate pages as spam.”
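To make the idea of using features jointly more concrete, here is a minimal Python sketch. It is not the paper’s C4.5 decision tree: it swaps in a simple vote over three signals, and the feature choices other than the compression ratio, the thresholds, and the names `page_features` and `looks_spammy` are all hypothetical.

```python
import gzip
from collections import Counter


def page_features(page: str) -> dict[str, float]:
    """Compute three illustrative on-page signals for a block of text."""
    raw = page.encode("utf-8")
    words = page.lower().split()
    if not raw or not words:
        raise ValueError("page has no text")
    top_count = Counter(words).most_common(1)[0][1]
    return {
        "compression_ratio": len(raw) / len(gzip.compress(raw)),
        "top_word_share": top_count / len(words),          # dominance of one word
        "unique_word_share": len(set(words)) / len(words),  # vocabulary variety
    }


def looks_spammy(page: str, min_votes: int = 2) -> bool:
    """Flag a page only when at least `min_votes` signals agree (hypothetical thresholds)."""
    f = page_features(page)
    votes = [
        f["compression_ratio"] >= 4.0,
        f["top_word_share"] >= 0.2,
        f["unique_word_share"] <= 0.1,
    ]
    return sum(votes) >= min_votes


doorway = "plumber in Austin best plumber Austin cheap plumber " * 100
slogan = "Pizza pizza pizza! We love pizza."

print(looks_spammy(doorway))  # True: all three signals fire
print(looks_spammy(slogan))   # False: only the repeated-word signal fires
```

The short slogan trips one signal but is not flagged, which is the effect the researchers describe: requiring agreement between signals cuts down on false positives that a single signal would produce.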

Key Insight:

Misidentifying “very few legitimate pages as spam” was a significant breakthrough. The important insight that everyone involved with SEO should take away from this is that one signal by itself can result in false positives. Using multiple signals increases the accuracy.

What this means is that SEO tests of isolated ranking or quality signals will not yield reliable results that can be trusted for making strategy or business decisions.

Takeaways

We don’t know for certain whether compressibility is used at the search engines, but it’s an easy-to-use signal that, combined with others, could be used to catch simple kinds of spam like thousands of city name doorway pages with similar content. Yet even if the search engines don’t use this signal, it does show how easy it is to catch that kind of search engine manipulation and that it’s something search engines are well able to handle today.

Here are the key points of this article to keep in mind:

- Doorway pages with duplicate content are easy to catch because they compress at a higher ratio than normal web pages.

- Groups of web pages with a compression ratio above 4.0 were predominantly spam.

- Negative quality signals used by themselves to catch spam can lead to false positives.

- In this particular test, they discovered that on-page negative quality signals only catch specific types of spam.

- When used alone, the compressibility signal only catches redundancy-type spam, fails to detect other forms of spam, and leads to false positives.

- Combining quality signals improves spam detection accuracy and reduces false positives.

- Search engines today have a higher accuracy of spam detection with the use of AI like SpamBrain.

Read the research paper, which is linked from the Google Scholar page of Marc Najork:

Detecting spam web pages through content analysis

Featured Image by Shutterstock/pathdoc