While online discussion obsesses over whether ChatGPT spells the end of Google, websites are losing revenue from a far more real and immediate problem: some of their most valuable pages are invisible to the systems that matter.

Because while the bots have changed, the game hasn’t. Your website content needs to be crawlable.

Between May 2024 and May 2025, AI crawler traffic surged by 96%, with GPTBot’s share jumping from 5% to 30%. But this growth isn’t replacing traditional search traffic.

Semrush’s analysis of 260 billion rows of clickstream data showed that people who start using ChatGPT maintain their Google search habits. They’re not switching; they’re expanding.

This means enterprise sites need to satisfy both traditional crawlers and AI systems, while maintaining the same crawl budget they had before.

The dilemma: Crawl volume vs. revenue impact

Many companies get crawlability wrong by focusing on what we can easily measure (total pages crawled) rather than what actually drives revenue (which pages get crawled).

When Cloudflare analyzed AI crawler behavior, they discovered a troubling inefficiency. For example, for every visitor Anthropic’s Claude refers back to websites, ClaudeBot crawls tens of thousands of pages. This unbalanced crawl-to-referral ratio reveals a fundamental asymmetry of modern search: massive consumption, minimal traffic return.

That’s why it’s imperative for crawl budgets to be effectively directed toward your most valuable pages. In many cases, the problem isn’t about having too many pages. It’s about the wrong pages consuming your crawl budget.

The PAVE framework: Prioritizing for revenue

The PAVE framework helps manage crawlability across both search channels. It offers four dimensions that determine whether a page deserves crawl budget:

- P – Potential: Does this page have realistic ranking or referral potential? Not all pages should be crawled. If a page isn’t conversion-optimized, provides thin content, or has minimal ranking potential, you’re wasting crawl budget that could go to value-generating pages.

- A – Authority: The markers are familiar for Google, but as shown in Semrush Enterprise’s AI Visibility Index, if your content lacks sufficient authority signals, such as clear E-E-A-T and domain credibility, AI bots will also skip it.

- V – Value: How much unique, synthesizable information exists per crawl request? Pages requiring JavaScript rendering take 9x longer to crawl than static HTML. And remember: JavaScript is also skipped by AI crawlers.

- E – Evolution: How often does this page change in meaningful ways? Crawl demand increases for pages that update frequently with valuable content. Static pages get deprioritized automatically.
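One way to make the four dimensions operational is to score each page and bucket it. The sketch below is only an illustration: the 0-5 scale, the `LOW` threshold, the `PageScore` and `crawl_decision` names, and the intermediate "review" bucket are all assumptions, not part of the PAVE framework itself.

```python
from dataclasses import dataclass


@dataclass
class PageScore:
    """Hypothetical per-page PAVE scores on an assumed 0-5 scale."""
    url: str
    potential: int
    authority: int
    value: int
    evolution: int


LOW = 2  # assumed cutoff: scores below this count as "low"


def crawl_decision(page: PageScore) -> str:
    """Bucket a page by how many PAVE dimensions score low."""
    scores = (page.potential, page.authority, page.value, page.evolution)
    if all(s < LOW for s in scores):
        # Low on all four dimensions: candidate for blocking or consolidation.
        return "block_or_consolidate"
    if any(s < LOW for s in scores):
        # Mixed picture: flag for a human decision (an added, assumed tier).
        return "review"
    return "keep"


if __name__ == "__main__":
    for p in (
        PageScore("/filters?color=red&sort=asc", 1, 1, 0, 1),
        PageScore("/boots/trail-boot", 4, 3, 5, 3),
    ):
        print(p.url, "->", crawl_decision(p))
```

How the individual scores are produced (traffic data, link metrics, change frequency) is left open here; the point is only that the decision rule can be made explicit and repeatable.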

Server-side rendering is a revenue multiplier

JavaScript-heavy sites are paying a 9x rendering tax on their crawl budget in Google. And most AI crawlers don’t execute JavaScript. They grab raw HTML and move on.

If you’re relying on client-side rendering (CSR), where content assembles in the browser after JavaScript runs, you’re hurting your crawl budget.

Server-side rendering (SSR) flips the equation entirely.

With SSR, your web server pre-builds the full HTML before sending it to browsers or bots. No JavaScript execution needed to access main content. The bot gets what it needs in the first request. Product names, pricing, and descriptions are all immediately visible and indexable.
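A rough way to see the difference is to look at a page the way a non-rendering bot would: parse the raw HTML, ignore anything inside `<script>` and `<style>`, and check whether the key facts are present. The sketch below uses only the Python standard library; the function name `missing_from_raw_html` and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text outside <script>/<style>, as a non-rendering bot might see it."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(html: str, required: list[str]) -> list[str]:
    """Return the required strings that do not appear in the raw HTML text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [item for item in required if item.lower() not in text]


if __name__ == "__main__":
    ssr_page = "<html><body><h1>Trail Boot</h1><p>Price: $129</p></body></html>"
    csr_page = (
        '<html><body><div id="root"></div>'
        '<script>render({name: "Trail Boot", price: "$129"})</script></body></html>'
    )
    facts = ["Trail Boot", "$129"]
    print("SSR missing:", missing_from_raw_html(ssr_page, facts))
    print("CSR missing:", missing_from_raw_html(csr_page, facts))
```

In practice the HTML would come from fetching the live URL without executing JavaScript; the inline strings here just keep the example self-contained.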

But here’s where SSR becomes a true revenue multiplier: this added speed doesn’t just help bots, but also dramatically improves conversion rates.

Deloitte’s analysis with Google found that a mere 0.1 second improvement in mobile load time drives:

- 8.4% increase in retail conversions

- 10.1% increase in travel conversions

- 9.2% increase in average order value for retail

SSR makes pages load faster for users and bots because the server does the heavy lifting once, then serves the pre-rendered result to everyone. No redundant client-side processing. No JavaScript execution delays. Just fast, crawlable, convertible pages.

For enterprise sites with millions of pages, SSR might be a key factor in whether bots and users actually see, and convert on, your highest-value content.

The disconnected data gap

Many businesses are flying blind due to disconnected data.

- Crawl logs live in one system.

- Your SEO rank tracking lives in another.

- Your AI search monitoring in a third.

This makes it nearly impossible to definitively answer the question: “Which crawl issues are costing us revenue right now?”

This fragmentation creates a compounding cost of making decisions without complete information. Every day you operate with siloed data, you risk optimizing for the wrong priorities.

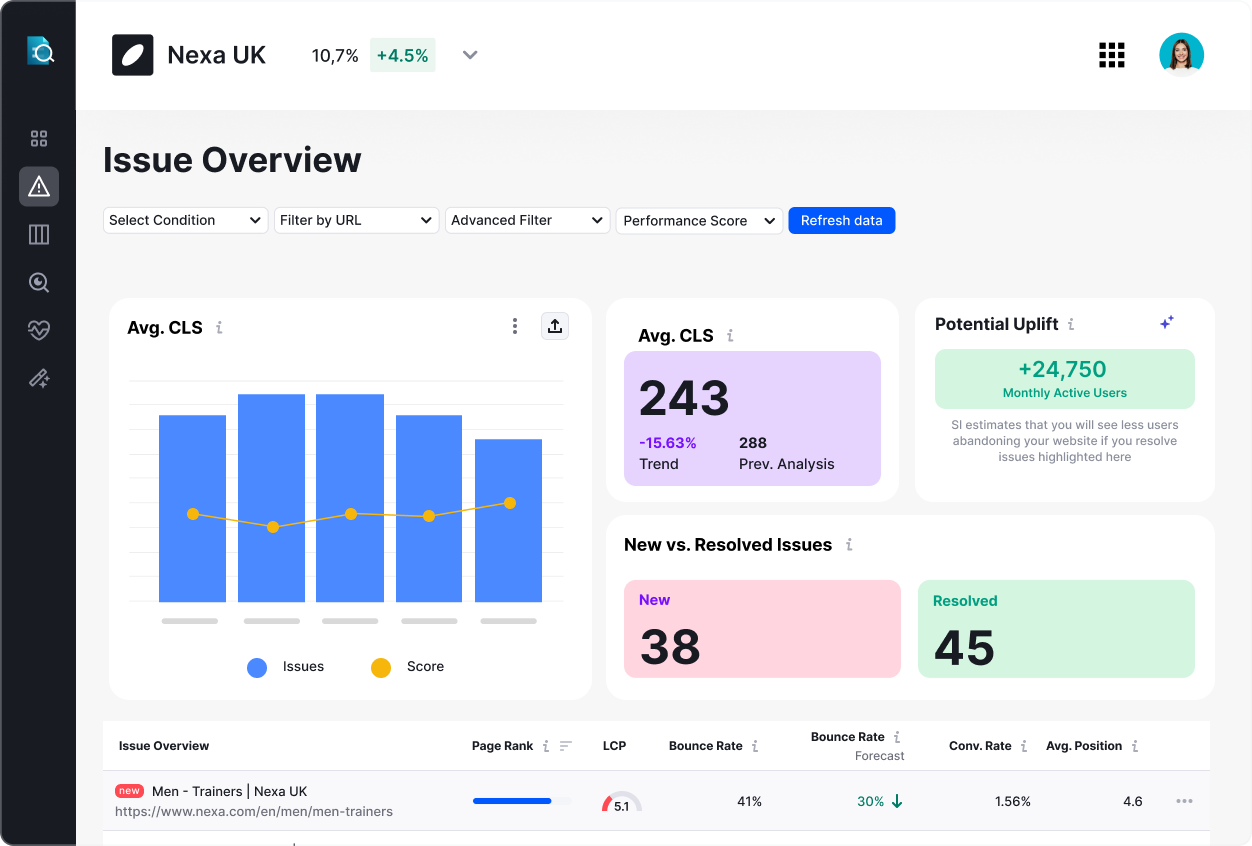

The businesses that solve crawlability and manage their site health at scale don’t just collect more data. They unify crawl intelligence with search performance data to create a complete picture.

When teams can segment crawl data by business units, compare pre- and post-deployment performance side-by-side, and correlate crawl health with actual search visibility, you transform crawl budget from a technical mystery into a strategic lever.

1. Conduct a crawl audit using the PAVE framework

Use Google Search Console’s Crawl Stats report alongside log file analysis to identify which URLs consume the most crawl budget. But here’s where most enterprises hit a wall: Google Search Console wasn’t built for complex, multi-regional sites with millions of pages.

This is where scalable site health management becomes crucial. Global teams need the ability to segment crawl data by regions, product lines, or languages to see exactly which parts of your site are burning budget instead of driving conversions. This is the kind of precision segmentation that Semrush Enterprise’s Site Intelligence enables.
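For teams starting from raw server logs, a first pass at this segmentation can be sketched in a few lines. The example below assumes access logs in the common "combined" format, treats the first path segment (for example `/en` or `/de`) as the site section, and identifies bots by a substring match on the user agent, which can be spoofed. The regular expression, the `BOTS` list, and the function name are illustrative assumptions.

```python
import re
from collections import Counter

# Assumed "combined" log format: "METHOD path proto" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
BOTS = ("Googlebot", "GPTBot", "ClaudeBot")  # user-agent tokens to look for


def crawl_hits_by_section(lines):
    """Count bot requests per (bot, first path segment)."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match.group("agent").lower()
        bot = next((b for b in BOTS if b.lower() in agent), None)
        if bot is None:
            continue  # not one of the crawlers being tracked
        path = match.group("path").split("?", 1)[0]  # drop the query string
        section = "/" + path.strip("/").split("/", 1)[0]
        hits[(bot, section)] += 1
    return hits


if __name__ == "__main__":
    sample = [
        '1.2.3.4 - - [10/May/2025:10:00:00 +0000] "GET /en/boots/trail HTTP/1.1" '
        '200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
        '1.2.3.5 - - [10/May/2025:10:00:01 +0000] "GET /de/cart?step=2 HTTP/1.1" '
        '200 900 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    ]
    for (bot, section), count in crawl_hits_by_section(sample).most_common():
        print(bot, section, count)
```

Sections that attract many bot hits but contribute little revenue are the ones to examine first under the PAVE dimensions.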

Once you have an overview, apply the PAVE framework: if a page scores low on all four dimensions, consider blocking it from crawls or consolidating it with other content.

Focused optimization via improving internal linking, fixing page depth issues, and updating sitemaps to include only indexable URLs can also yield big dividends.
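As an illustration of the sitemap point, the sketch below filters a list of crawled pages down to indexable URLs and writes them as a sitemap. The definition of "indexable" used here (HTTP 200, no noindex directive, self-referencing canonical) and the shape of the input records are assumptions; real audits may apply additional rules.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages) -> str:
    """Return sitemap XML containing only pages that pass the assumed indexability checks."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        indexable = (
            page["status"] == 200
            and not page["noindex"]
            and page["canonical"] == page["url"]  # self-referencing canonical
        )
        if indexable:
            url_el = ET.SubElement(urlset, "url")
            ET.SubElement(url_el, "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    crawled = [
        {"url": "https://example.com/boots", "status": 200, "noindex": False,
         "canonical": "https://example.com/boots"},
        {"url": "https://example.com/boots?sort=asc", "status": 200, "noindex": False,
         "canonical": "https://example.com/boots"},
        {"url": "https://example.com/old", "status": 404, "noindex": False,
         "canonical": "https://example.com/old"},
    ]
    print(build_sitemap(crawled))
```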

2. Implement continuous monitoring, not periodic audits

Most businesses conduct quarterly or annual audits, taking a snapshot in time and calling it a day.

But crawl budget and wider site health problems don’t wait for your audit schedule. A deployment on Tuesday can silently leave key pages invisible on Wednesday, and you won’t discover it until your next review. After weeks of revenue loss.

The solution is implementing monitoring that catches issues before they compound. When you can align audits with deployments, track your site historically, and compare releases or environments side-by-side, you move from reactive fire drills into a proactive revenue protection system.
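The release-to-release comparison can be sketched as a diff between two crawl snapshots. In this minimal example, each snapshot is assumed to be a dictionary mapping a URL to its HTTP status and an `indexable` flag; the snapshot structure and the function name `newly_uncrawlable` are hypothetical.

```python
def newly_uncrawlable(before: dict[str, dict], after: dict[str, dict]) -> list[str]:
    """List URLs that were healthy before a deployment and are not healthy after it."""
    regressions = []
    for url, old in before.items():
        new = after.get(url)  # None if the URL vanished from the new crawl
        was_ok = old["status"] == 200 and old["indexable"]
        is_ok = new is not None and new["status"] == 200 and new["indexable"]
        if was_ok and not is_ok:
            regressions.append(url)
    return sorted(regressions)


if __name__ == "__main__":
    tuesday = {
        "/boots/trail-boot": {"status": 200, "indexable": True},
        "/boots/city-boot": {"status": 200, "indexable": True},
    }
    wednesday = {
        "/boots/trail-boot": {"status": 200, "indexable": False},  # e.g. stray noindex
        "/boots/city-boot": {"status": 200, "indexable": True},
    }
    print(newly_uncrawlable(tuesday, wednesday))
```

Running a check like this as part of each deployment, rather than once a quarter, is what turns the audit into monitoring.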

3. Systematically build your AI authority

AI search operates in stages. When users research general topics (“best waterproof hiking boots”), AI synthesizes from review sites and comparison content. But when users investigate specific brands or products (“are Salomon X Ultra waterproof, and how much do they cost?”) AI shifts its research approach entirely.

Your official website becomes the primary source. This is the authority game, and most enterprises are losing it by neglecting their foundational information architecture.

Here’s a quick checklist:

- Ensure your product descriptions are factual, comprehensive, and ungated (no JavaScript-heavy content)

- Clearly state vital information like pricing in static HTML

- Use structured data markup for technical specifications

- Add feature comparisons to your domain, don’t rely on third-party sites
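For the structured data item in the checklist, one common approach is schema.org `Product` markup embedded as JSON-LD in the server-rendered HTML. The sketch below generates such a tag; the product name, price, and specifications are made-up placeholders, and the helper name `product_jsonld` is hypothetical.

```python
import json


def product_jsonld(name: str, description: str, price: float,
                   currency: str, specs: dict[str, str]) -> str:
    """Build a JSON-LD <script> tag describing a product with schema.org vocabulary."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        # Technical specifications as name/value pairs.
        "additionalProperty": [
            {"@type": "PropertyValue", "name": key, "value": value}
            for key, value in specs.items()
        ],
    }
    # Escape "</" so text values cannot close the surrounding script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


if __name__ == "__main__":
    print(product_jsonld(
        "Example Trail Boot",              # placeholder product
        "Waterproof hiking boot.",
        129.0,
        "USD",
        {"Weight": "400 g", "Membrane": "Waterproof"},
    ))
```

Because the tag is plain text in the initial HTML response, bots that never execute JavaScript can still read the price and specifications.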

Visibility is profitability

Your crawl budget problem is really a revenue recognition problem disguised as a technical issue.

Every day that high-value pages are invisible is a day of lost competitive positioning, missed conversions, and compounding revenue loss.

With search crawler traffic surging, and ChatGPT now reporting over 700 million daily users, the stakes have never been higher.

The winners won’t be those with the most pages or the most sophisticated content, but those who optimize site health so bots reach their highest-value pages first.

For enterprises managing millions of pages across multiple regions, consider how unified crawl intelligence, which combines deep crawl data with search performance metrics, can transform your site health management from a technical headache into a revenue protection system. Learn more about Site Intelligence by Semrush Enterprise.

Opinions expressed in this article are those of the sponsor. MarTech neither confirms nor disputes any of the conclusions presented above.
