Why decisioning is being measured by defensibility, not just performance.

As AI decisioning becomes embedded in core workflows, the standard is changing.

Decisions aren’t made inside a single team or silo. They move across systems, triggering outcomes as they go: approvals, risk classifications, customer interactions, often without pause between evaluation and execution. What begins as a single input becomes part of a broader chain of decisions, each building on the last. And as the chain grows, so does the expectation that each step within it can be understood and justified.

The question is no longer just what happened.

It’s why it happened that way.

And more importantly: can that reasoning be defended later under board and regulatory scrutiny?

Once decisions need to be explained, identity stops being another input in a system; it becomes the basis for whether those decisions can be justified at all.

When Decisions Don’t Sit Still

There was a time when decisions had room to settle.

A flagged transaction could be reviewed before action was taken, a declined application could be revisited with additional context, a questionable model output could be paused, examined, and corrected. Decisions were made within processes that allowed more time for review and reconsideration.

But as decisioning becomes embedded in real-time systems, outcomes don’t wait, and a single decision at onboarding can influence risk scoring, customer segmentation, and future approvals within seconds. What was once a single moment is now a link in a continuous chain. From there, effects accumulate, shaping what systems learn, what customers experience, and how organizations are evaluated when examined later.

This changes the nature of decisioning itself, because a decision is no longer just an outcome but a position that will eventually need to be defended.

Accountability Moves Upstream

Historically, accountability was something applied retroactively.

You measured outcomes, monitored performance, investigated anomalies. And when something went wrong, you traced it back to understand what happened.

But this model breaks when decisions are made instantly and without time for pause, especially as AI-driven decisions execute without human intervention.

By the time a decision is reviewed, it has already propagated. It has:

- influenced downstream approval and denial logic

- updated fraud scoring and classification layers

- changed how the identity is handled across workflows

- fed into training data and model feedback loops

If the reasoning behind it is unclear, the problem stops being isolated and instead becomes systemic.

This is why accountability is shifting. Experian’s 2026 outlook explains what’s driving it: organizations are expected to demonstrate control over how decisions are made, not just measure their outcomes. That means decisions can’t exist as isolated outputs. They need to be traceable back to the data that informed them, aligned across functions, and consistent.

This changes where the burden sits.

It’s not enough for models to produce performant outcomes. The data feeding those models must be structured, connected, and reliable enough to support those outcomes when they’re questioned by regulators, internal stakeholders, or customers themselves.

Which is why this expectation doesn’t start at the model layer.

It starts at identity.

Identity Becomes Evidence

When a decision is questioned, the model itself doesn’t provide the answer.

The explanation comes from the signals behind it.

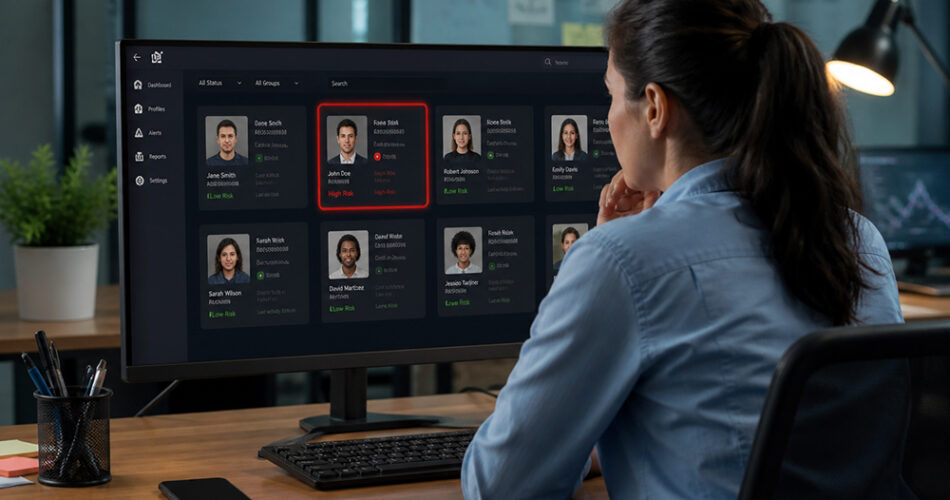

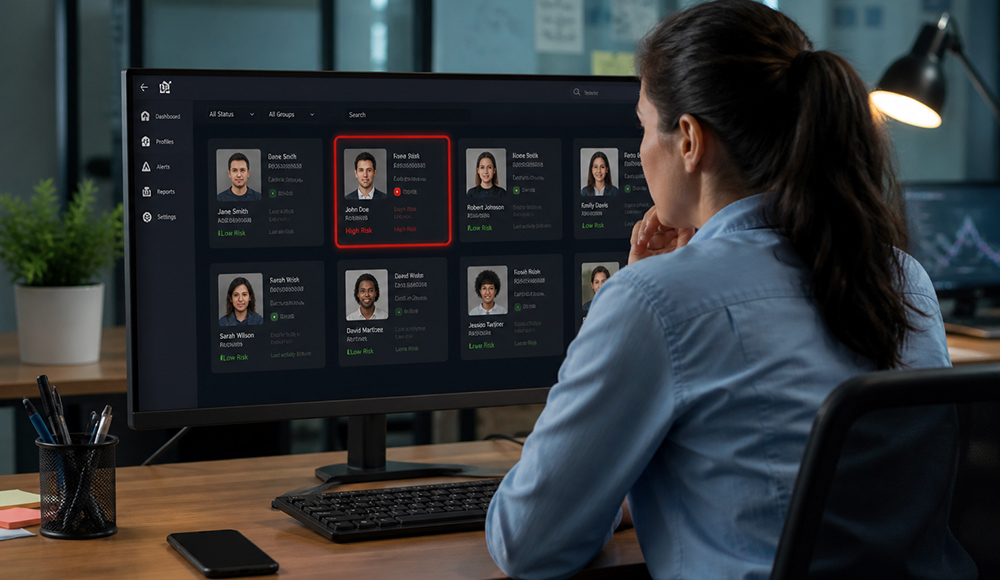

What was known about the identity at the time? What behavior supported the classification? What historical context made the decision reasonable? These factors determine whether a decision can be justified when it’s reviewed by regulators, auditors, or internal stakeholders.

Most identity systems were originally built to confirm existence, connect records, improve match rates, and extend reach. They’re effective at determining whether something appears valid in a moment. What they often lack, however, is the ability to show whether an identity behaves like a real, persistent person.

Without continuity, decisions are harder to defend, not necessarily because they’re wrong, but because they’re incomplete. They rely on signals capturing a snapshot rather than a pattern, a moment rather than a trajectory.

As a result, identity extends beyond recognition to supporting how decisions are justified.

What Defensibility Changes

Identity strategies have traditionally been oriented around expansion, presuming a broader view would lead to better outcomes. And in many cases, it did, particularly where the goal was activation or audience growth.

But defensibility requires something different from expansion.

It requires consistency between what an identity has done, what it’s doing now, and what the system believes about it. Without this alignment, an identity may appear legitimate in one context and questionable in another, not because the individual changed, but because the system lacks a unified view.

As decisioning becomes autonomous, risk shifts from outcomes to explainability:

- decisions that can’t be reproduced or traced

- inputs lacking clear lineage or data provenance

- outputs inconsistent across models or decisioning layers
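One way to picture the first two gaps is a decision record that stores the inputs as they were known at decision time, where each input came from, and a hash that lets the decision be replayed later. The sketch below is illustrative only: `DecisionRecord`, `decide`, `reproduces`, the `score` rule, and its thresholds are hypothetical names and values, not part of any Experian or AtData product.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone


def fingerprint(payload: dict) -> str:
    """Return a stable SHA-256 hash of a JSON-serializable payload."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    model_version: str
    inputs: dict         # signals as they were known at decision time
    input_sources: dict  # lineage: which system supplied each input
    outcome: str
    decided_at: str      # ISO 8601 timestamp, UTC
    input_hash: str      # fingerprint of inputs, used to detect later edits


def score(inputs: dict) -> str:
    # Hypothetical rule standing in for a real model; thresholds are made up.
    if inputs["months_active"] >= 6 and inputs["recent_bounces"] == 0:
        return "approve"
    return "review"


def decide(decision_id: str, inputs: dict, input_sources: dict,
           model_version: str = "rules-v1") -> DecisionRecord:
    # Refuse to decide on inputs that have no recorded provenance.
    missing = set(inputs) - set(input_sources)
    if missing:
        raise ValueError(f"inputs without lineage: {sorted(missing)}")
    return DecisionRecord(
        decision_id=decision_id,
        model_version=model_version,
        inputs=dict(inputs),
        input_sources=dict(input_sources),
        outcome=score(inputs),
        decided_at=datetime.now(timezone.utc).isoformat(),
        input_hash=fingerprint(inputs),
    )


def reproduces(record: DecisionRecord) -> bool:
    """Replay the decision from stored inputs and compare with the record."""
    unchanged = fingerprint(record.inputs) == record.input_hash
    return unchanged and score(record.inputs) == record.outcome
```

A reviewer could call `reproduces(record)` months later. If the stored inputs were altered or the rule no longer yields the recorded outcome, the check fails, which is the kind of gap the list above describes.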

Though these gaps might not show up right away, they accumulate and eventually begin to erode confidence. More reach increases visibility, not conviction, and when decisions are questioned, conviction is what holds.

Moving from Identity as Input to Identity as Foundation

Identity has been repositioned from supporting decisions to determining whether they can be trusted and defended. This changes what organizations require from it.

Connecting identifiers or validating attributes in isolation isn’t enough; identity needs to show how a real person behaves across interactions, channels, and time. Likewise, systems must assess not just whether something appears valid in a single moment, but whether it aligns with established behavioral patterns.

So, how do you make decisions defensible? By relying on signals with memory: behavior, history, and recurrence. Without context, ambiguity creeps in, and in environments where decisions must be explained, ambiguity carries risk.
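As a rough illustration of the difference between a snapshot and a signal with memory, the sketch below summarizes an identifier’s activity as it was known on a given date. The function name `behavioral_summary`, its output fields, and the three-month recurrence threshold are assumptions chosen for the example, not a description of how any vendor computes behavioral signals.

```python
from datetime import date


def behavioral_summary(activity_dates: list[date], as_of: date) -> dict:
    """Summarize history and recurrence using only activity known by `as_of`."""
    seen = sorted(d for d in activity_dates if d <= as_of)
    if not seen:
        return {"history_days": 0, "active_months": 0, "recurring": False}

    # Distinct calendar months with at least one observed interaction.
    active_months = {(d.year, d.month) for d in seen}
    return {
        "history_days": (as_of - seen[0]).days,  # how long the identity has been seen
        "active_months": len(active_months),
        # Hypothetical threshold: activity in 3+ distinct months counts as recurring.
        "recurring": len(active_months) >= 3,
    }


# An address seen across several months versus one first seen days ago.
established = behavioral_summary(
    [date(2025, 1, 10), date(2025, 2, 3), date(2025, 4, 20)],
    as_of=date(2025, 6, 1),
)
fresh = behavioral_summary([date(2025, 5, 30)], as_of=date(2025, 6, 1))
```

Both identifiers might pass a point-in-time validity check, but only the first carries the history and recurrence that a later review could point to. Filtering on `as_of` keeps the summary limited to what was known when the decision was made.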

The Experian and AtData acquisition is a direct response to the need for defensible identity. It goes beyond expanding coverage by strengthening the identity layer with behavioral depth. Signals once treated as static or point-in-time are extended with activity and history, allowing for a better understanding of the underlying identity.

With this model, email acts as a stable reference point, carrying history and activity across systems to connect behavior rather than capture a single moment. Paired with behavioral intelligence, identity goes beyond record matching and surfaces patterns across interactions, so decisions naturally evolve to rely less on isolated signals. Continuity becomes the basis for confidence and, ultimately, defensibility.

As organizations place more responsibility on automated decisioning, the value of identity needs to be measured differently. Not by how much it can connect. But by how well it can support the decisions built on top of it.

Because if every decision can be questioned, identity is not just a gateway into a process.

It’s the reason behind it.
