LiveRamp today announced native support for NVIDIA AI infrastructure within its clean rooms. The upgrade to the underlying compute architecture is built to handle the most demanding AI model training and inference workloads at up to 15 times the speed of CPU-based environments. The move addresses a structural limitation that has constrained how AI companies work with clean room data, and it repositions LiveRamp as something closer to a compute platform than a pure data collaboration layer.

The San Francisco-based company, listed on the New York Stock Exchange under the ticker RAMP, serves a network of more than 900 brands, publishers, and platforms. Until today, its clean room environments ran on CPU-based compute. That created a quiet but persistent problem: AI partners wanting to bring their models into LiveRamp’s secure environments were forced to rearchitect those models from GPU-native formats to run on CPU infrastructure. That process can absorb weeks of engineering work, introduce performance regressions, and add validation overhead before a single byte of brand data is touched.

What the GPU upgrade changes

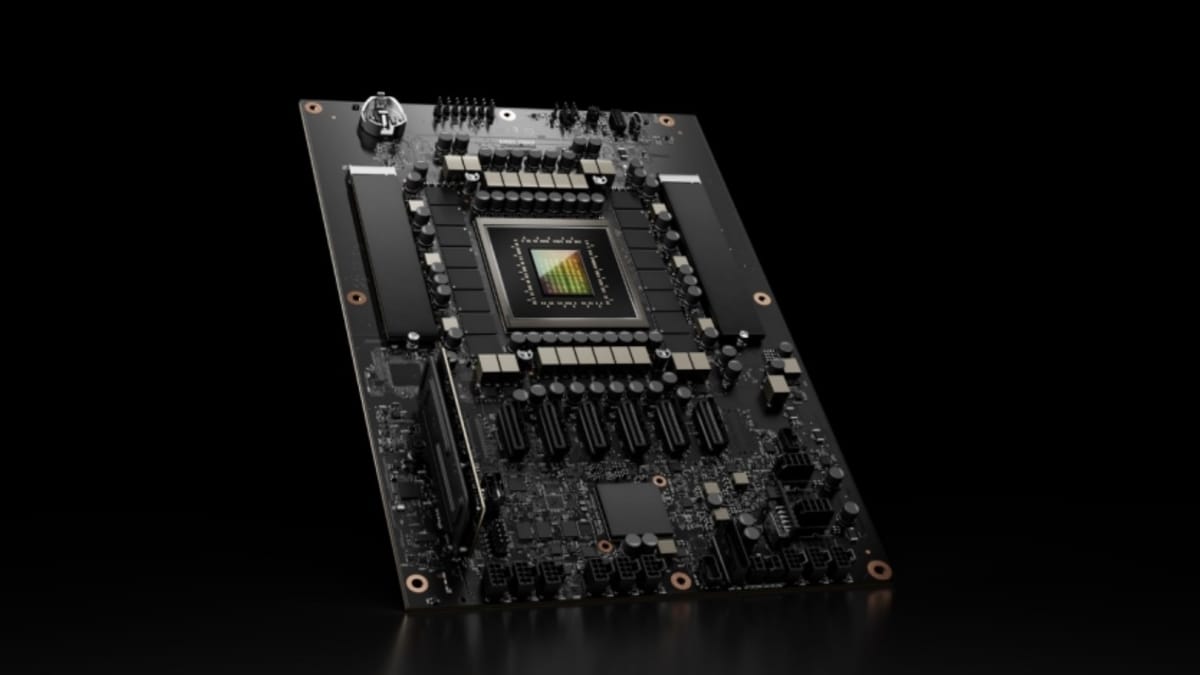

The technical shift is direct. LiveRamp has replaced the CPU-based compute layer inside its clean rooms with GPU-optimized infrastructure provided by NVIDIA. AI partners can now bring existing code into the clean room environment without modification. According to the announcement, the plug-and-play model means no complex or time-consuming recoding is required to connect heavy-duty models to the platform.

Initial tests show model performance up to 15 times faster than the prior CPU-based environments. LiveRamp did not publish specific benchmark conditions or say which model architectures were tested, but framed the figure as representative of performance optimization timelines for marketers. The 15x figure applies to both training, the process of building and refining a model on data, and inference, the process of running a trained model to generate predictions. Both are computationally intensive operations that GPUs handle with significantly higher throughput than general-purpose processors.
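To make the “up to 15x” figure concrete: a speedup factor divides wall-clock time, so runtime scales as one over the speedup. The sketch below assumes a hypothetical 30-hour CPU training job; that job length is purely illustrative and does not come from the announcement, and 15x is LiveRamp’s stated upper bound rather than a guaranteed result for any given workload.

```python
def gpu_runtime_hours(cpu_hours: float, speedup: float) -> float:
    """Estimated runtime after a speedup: runtime scales as 1 / speedup."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return cpu_hours / speedup


# Hypothetical 30-hour CPU training job (illustrative number only).
cpu_job_hours = 30.0

# 15.0 is the announced "up to" ceiling; lower factors show a more modest gain.
for factor in (1.0, 5.0, 15.0):
    print(f"{factor:>4.0f}x -> {gpu_runtime_hours(cpu_job_hours, factor):.1f} h")
```

At the full 15x, the hypothetical 30-hour job drops to 2 hours; at a more modest 5x it would take 6 hours.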

Matt Karasick, Chief Product Officer at LiveRamp, framed the upgrade in terms of what it unlocks for the platform’s network. “Whether a data scientist is training a predictive model on billions of rows of transactions, or a marketer needs a new model to improve measurement,” he said, “LiveRamp empowers clients to train and run their most advanced models at scale, without ever compromising data security or proprietary IP.” The scale reference matters: billions of rows of transaction data is not a workload CPU environments handle gracefully. GPU parallelism is what makes that kind of computation viable inside a governed clean room rather than an open cloud environment.

NVIDIA framed the same development from the compute side. Jamie Allan, Director of AdTech & Digital Marketing Industries at NVIDIA, described GPUs as the foundational layer for the next generation of advertising technology. “GPUs are the engine of the next generation AI marketing tech stack, purpose-built for the most demanding training and inference workloads,” he said. “Extending the power of NVIDIA’s accelerated computing through LiveRamp gives marketers a frictionless foundation to scale their marketing and transform the speed of innovation.” The framing positions this not as a feature add but as an architectural shift: the kind of compute that previously required a separate cloud environment is now embedded inside the clean room itself.

Clean rooms, model weights, and data security

What makes the GPU integration structurally significant is precisely where it sits: inside LiveRamp’s clean room architecture, not adjacent to it. Clean rooms are secure data collaboration environments where multiple parties can analyze combined datasets without any single party accessing another party’s raw data. LiveRamp’s infrastructure uses its RampID pseudonymized identifier system to match data across advertisers, publishers, and data partners while preventing individual-level exposure.
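The general idea of matching on pseudonymized identifiers can be sketched with a keyed hash. Each party’s raw identifier is replaced by an HMAC token computed with a secret that, by assumption, only the clean room operator holds; records are joined on that token, and only an aggregate count is released, subject to a minimum group size. This is a generic illustration, not how RampID is actually generated or matched: the key, the threshold of 3, and the function names are all hypothetical.

```python
import hashlib
import hmac
from typing import List, Optional

# Hypothetical secret held only by the clean room operator, never by the parties.
OPERATOR_KEY = b"hypothetical-operator-secret"
# Hypothetical minimum group size; smaller results are suppressed.
MIN_GROUP_SIZE = 3


def pseudonymize(raw_id: str) -> str:
    """Replace a raw identifier (e.g. an email) with a keyed-hash token."""
    normalized = raw_id.strip().lower()
    return hmac.new(OPERATOR_KEY, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def overlap_count(advertiser_ids: List[str], publisher_ids: List[str]) -> Optional[int]:
    """Count shared users via pseudonymous tokens; suppress counts below the threshold."""
    adv_tokens = {pseudonymize(i) for i in advertiser_ids}
    pub_tokens = {pseudonymize(i) for i in publisher_ids}
    matched = len(adv_tokens & pub_tokens)
    # Only an aggregate leaves the clean room, and only if it clears the threshold.
    return matched if matched >= MIN_GROUP_SIZE else None


advertiser = ["a@example.com", "B@example.com", "c@example.com", "d@example.com"]
publisher = ["b@example.com", "c@example.com", "d@example.com", "e@example.com"]
print(overlap_count(advertiser, publisher))
```

Running it prints 3: the b, c, and d addresses match once normalized to lowercase. Neither party ever sees the other’s list, and an overlap smaller than the threshold would come back as None rather than a number.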

Adding GPU compute inside that perimeter means computationally intensive models can train on brand first-party data without the model weights or the underlying data leaving the secure environment. This is a meaningful protection for AI companies whose models represent substantial proprietary investment. A company that has trained a sophisticated outcome prediction model on years of campaign data cannot hand that model’s weights to a brand without exposing its intellectual property. Clean room compute solves that: the model runs inside the secure environment, the brand’s data never leaves, and neither do the model weights.
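The “model in, only results out” arrangement can be sketched as a narrow interface. The partner’s weights and the brand’s rows are held inside one object, and the only method intended for callers returns segment-level average scores, with small segments withheld. Everything here (the class, the linear scorer standing in for a real model, the segment names, and the threshold) is hypothetical, and Python attributes cannot actually enforce isolation; a real clean room relies on infrastructure-level controls that this sketch does not model.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class CleanRoomJob:
    """Hypothetical job: partner weights and brand rows stay inside this object."""

    _weights: List[float]                 # partner's proprietary model weights
    _rows: List[Tuple[str, List[float]]]  # brand rows as (segment, features)
    min_rows_per_segment: int = 2         # hypothetical suppression threshold

    def _score(self, features: List[float]) -> float:
        # Simple linear scorer standing in for any partner model.
        return sum(w * x for w, x in zip(self._weights, features))

    def segment_report(self) -> Dict[str, float]:
        """The only intended output: mean score per segment, small segments withheld."""
        buckets: Dict[str, List[float]] = defaultdict(list)
        for segment, features in self._rows:
            buckets[segment].append(self._score(features))
        return {
            seg: sum(scores) / len(scores)
            for seg, scores in buckets.items()
            if len(scores) >= self.min_rows_per_segment
        }


job = CleanRoomJob(
    _weights=[0.5, 2.0],
    _rows=[("loyal", [1.0, 1.0]), ("loyal", [3.0, 0.0]), ("new", [2.0, 2.0])],
)
print(job.segment_report())
```

Here the report contains only the “loyal” segment, with a mean score of 2.0; the single-row “new” segment is withheld, and neither the weights nor the rows appear in the output.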

The data movement question matters just as much from the brand’s perspective. Previously, a brand wanting to train a model on its first-party data using GPU infrastructure would typically need to export permissioned or aggregated datasets to an external cloud GPU environment. Each data movement step introduces latency, governance complexity, and surface area for potential exposure. Running the same workload inside LiveRamp’s clean room eliminates that movement entirely.

According to the announcement, proprietary models can run inside the clean room to provide campaign optimization, audience selection, and performance prediction services to brands, without raw data ever leaving the secure environment. That capability serves a specific market segment: AI companies that want to offer model-as-a-service to brands but cannot afford to expose their code or weights in the process.

The inPowered AI perspective

inPowered AI, a sell-side AI company building outcome-driven models for programmatic advertising, participated as an early partner in the integration. Pirouz Nilforoush, President and Co-Founder at inPowered AI, explained the problem the GPU infrastructure solves for companies in that position. “Training and scaling outcome-driven models on the sell-side requires both massive compute and a secure data foundation,” he said. Meeting both requirements at once has historically forced a tradeoff: companies either accepted the performance limitations of CPU-based clean rooms or moved data outside secure environments to access GPU infrastructure.

The GPU-enabled clean rooms resolve that tradeoff directly. “LiveRamp’s GPU-enabled clean rooms give us the ability to move faster and operate at greater scale,” Nilforoush said, “unlocking more precise decisioning across the open web for brands.” For a sell-side AI company, more precise decisioning translates into better campaign performance, which in turn justifies the commercial model of offering AI optimization as a service. The clean room removes the data exposure risk that would otherwise prevent brands from allowing a third-party AI model to train on their first-party customer records.

inPowered AI’s involvement as a launch partner also signals the intended customer profile. The GPU integration is not aimed solely at large brands with internal data science teams. It targets the ecosystem of AI companies that want to distribute their models through LiveRamp’s network of 900+ partners, accessing brand data at scale without the legal and technical friction of bilateral data-sharing agreements.

Availability and go-to-market

The integration is in limited release as of today, with general availability expected later in 2026. LiveRamp is directing interested parties to a dedicated contact email address for early access.

That staged rollout is consistent with how LiveRamp has introduced other significant infrastructure changes over the past eighteen months. The company launched agentic AI orchestration in October 2025, enabling autonomous AI agents to access identity resolution, segmentation, activation, measurement, and clean room capabilities, and positioning itself as the first data collaboration platform to give AI agents governed access to its full marketing toolset. It then expanded its Data Marketplace in January 2026 to support licensing data for AI training, accessing pre-built AI models, and deploying AI-powered applications through governed infrastructure. In March 2026, live AI agents from Newton Research and SemantIQ were deployed for cross-media measurement and healthcare audience building.

The GPU upgrade does not stand alone in that sequence. It provides the raw compute capacity that makes the agents, models, and marketplace infrastructure useful at production scale.

Context: the gap between clean rooms and GPU compute

Clean rooms were designed for analytics workloads: SQL queries across matched datasets, attribution calculations, audience overlap measurements. They were not built for the iterative, matrix-heavy operations that neural network training demands. As a result, companies wanting to use clean room data for AI model training typically faced a two-environment problem: run privacy-preserving data preparation inside the clean room, export to a separate GPU cluster for training, then reimport the results. Each boundary crossing between environments is a governance event and a potential exposure point.

LiveRamp’s clean room technology has expanded significantly since the January 2024 acquisition of Habu, a deal valued at approximately $200 million that brought clean room capabilities spanning clouds, data warehouses, and walled gardens. In October 2025, LiveRamp enabled retail media networks to measure Meta campaign performance through its Clean Room, demonstrating measurement use cases beyond traditional audience activation. In December 2025, Uber launched its Intelligence insights platform on LiveRamp’s infrastructure, a significant validation of the platform as a neutral data collaboration layer for companies with large consumer data holdings. And this month, DIRECTV Advertising became the first multichannel video programming distributor to integrate with LiveRamp’s CAPI Hub, enabling real-time conversion signals across premium CTV inventory ahead of the 2026-27 Upfront season.

Each of those milestones expanded what LiveRamp’s clean room can do with data. The GPU integration expands what it can do with compute. The combination of governed data access and GPU-class processing power is what makes training sophisticated AI models inside a clean room viable.

What it means for AI partners and marketers

For AI companies building advertising models, the GPU integration opens a path to market that did not previously exist at this scale. Running proprietary models inside LiveRamp’s secure environment means accessing brand first-party data without the legal scaffolding of a full data-sharing agreement, without exposing model weights, and without managing a separate GPU cluster. The clean room handles governance. NVIDIA’s infrastructure handles compute. LiveRamp’s identity layer, RampID, which connects more than 900 partners, provides the data breadth that gives these models meaningful signal density.

The signal density question is worth dwelling on. A predictive model trained exclusively on a single brand’s first-party customer records is bounded by that brand’s data. A model trained inside a clean room, where that first-party data is matched and enriched against broader signals from LiveRamp’s partner network, has access to a substantially larger and more diverse training corpus. GPU compute is what makes training on that larger dataset feasible within the same secure environment, rather than requiring a separate infrastructure arrangement.

For marketers without internal data science teams, the upgrade has a different implication. Rather than building models from scratch, they can work with AI model providers directly through the Marketplace or the clean room environment, accessing advanced optimization capabilities without surrendering control over their first-party data. The announcement describes this as overcoming data science resource limitations through seamless access to advanced AI models, a positioning that targets the majority of marketing organizations that cannot staff and maintain GPU infrastructure themselves. Karasick’s point about making “the highest-performance computing and collaboration easy and accessible” to the network’s 900+ members is where the commercial logic lands: the GPU upgrade is not just a technical upgrade for data scientists but a capability shift for practitioners who have never written a line of model training code.

Industry backdrop

The GPU integration arrives as the advertising technology industry is actively building AI-native infrastructure at every layer. As ppc.land has tracked across its coverage of LiveRamp’s recent product cadence, the company has moved deliberately from data matching toward a full-stack AI platform. The partnership with Akkio announced in April 2026 integrates conversational AI into measurement experiences, allowing non-technical users to interrogate data through natural language. The IAB Tech Lab’s AAMP initiative, formally named in February 2026, is building standards infrastructure for the agentic advertising layer that sits above all of this: the autonomous systems that will eventually consume model outputs to make real-time decisions at scale.

These agentic systems require compute. The faster and more capable the underlying models, the more precisely those agents can optimize. In that context, GPU compute inside clean rooms is infrastructure for the agentic advertising stack: not a standalone product feature but a foundational layer that makes everything running on top of it more capable. Allan’s characterization of GPUs as “purpose-built for the most demanding training and inference workloads” points at why the clean room compute upgrade matters beyond its immediate use cases. The next generation of agentic advertising depends on models that are fast, frequently retrained, and operating on rich first-party data. LiveRamp is building the infrastructure that makes all three conditions achievable within a single governed environment.

Timeline

- January 2024 – LiveRamp acquires Habu, a data clean room software provider, in a cash and stock transaction valued at approximately $200 million, gaining cross-cloud clean room capabilities

- August 6, 2025 – LiveRamp reports Q1 fiscal 2026 revenue of $194.8 million, representing 10.7% year-over-year growth, with Data Marketplace generating $35 million

- October 1, 2025 – LiveRamp launches agentic AI orchestration, AI-powered segmentation, and AI-powered Marketplace search, positioning itself as the first data collaboration platform to give AI agents governed access to its full marketing toolset

- October 23, 2025 – LiveRamp enables retail media networks to measure Meta campaign performance through its Clean Room platform

- November 3, 2025 – LiveRamp donates the User Context Protocol to IAB Tech Lab to establish an open standard for AI agent signal exchange in advertising

- December 8, 2025 – Uber launches Intelligence insights platform powered by LiveRamp’s clean room infrastructure

- January 6, 2026 – LiveRamp expands its Data Marketplace to include data and models for AI training, as well as governed access to partner AI-powered applications and agents

- February 26, 2026 – IAB Tech Lab formally names its agentic advertising initiative AAMP, consolidating seven protocol components including the User Context Protocol donated by LiveRamp

- March 3, 2026 – LiveRamp deploys live AI agents from Newton Research and SemantIQ for cross-media measurement and healthcare provider audience building

- April 7, 2026 – LiveRamp and Akkio announce a strategic partnership integrating Akkio’s conversational AI engine into LiveRamp’s measurement experiences

- April 16, 2026 – DIRECTV Advertising becomes the first MVPD to integrate with LiveRamp’s CAPI Hub, enabling real-time conversion signals for CTV inventory

- April 27, 2026 – LiveRamp announces native support for NVIDIA AI infrastructure within its clean rooms, with initial tests showing up to 15x faster model performance compared to CPU-based environments; integration currently in limited release with GA expected later in 2026

Summary

Who: LiveRamp (NYSE: RAMP), headquartered in San Francisco, California, in partnership with NVIDIA. Key voices in the announcement include Matt Karasick, Chief Product Officer at LiveRamp; Jamie Allan, Director of AdTech & Digital Marketing Industries at NVIDIA; and Pirouz Nilforoush, President and Co-Founder at inPowered AI.

What: LiveRamp announced native support for NVIDIA AI infrastructure, upgrading the compute layer within its clean room architecture from CPU to GPU-optimized infrastructure. The upgrade allows AI partners to run existing models inside LiveRamp clean rooms without rearchitecting them, and enables brands to train and deploy sophisticated AI models on first-party data at up to 15 times the speed of prior CPU-based environments, without exposing data or model weights.

When: Announced April 27, 2026. The integration is currently in limited release, with general availability expected later in 2026.

Where: LiveRamp is headquartered in San Francisco, California, with offices worldwide. The integration is available through LiveRamp’s clean rooms and via the LiveRamp Marketplace.

Why: CPU-based clean room infrastructure created a technical barrier for AI partners, who were forced to rearchitect GPU-native models before bringing them into secure environments. The GPU upgrade removes that barrier, enabling plug-and-play model deployment inside clean rooms. For brands, it means faster AI model training and inference on first-party data without compromising data governance or intellectual property. The integration extends LiveRamp’s strategy of building a full AI infrastructure layer on top of its data collaboration network, adding compute capacity to complement its existing identity resolution, agentic AI, and marketplace capabilities.
