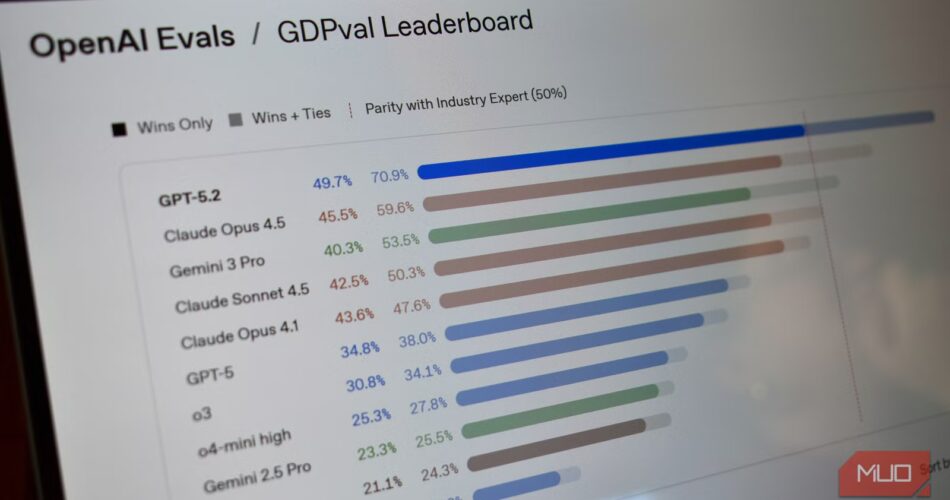

Every time a new AI model launches, the cacophony of AI benchmarking sites whirs into life and bombards us with colorful charts showing imperceptible, marginal improvements to uncontextualized numbers that mean nothing to most people.

Most of the time, if you're not an AI researcher, these figures and charts mean nothing. I mean, sure, "numbers go up = AI gets better" is a basic level of understanding, but these numbers often don't reveal the information pertinent to how most folks use AI.

The problem isn't that benchmarks are useless. It's that they cater to the wrong audience, functioning more like marketing than a clear explanation of what's new, what works, and how it'll save you time.

Why AI companies love benchmark charts

And why that's what causes all the problems

The reasoning behind AI benchmarking, like all benchmarking tests, is sound. Benchmarks help to simplify complex systems into easy-to-understand numbers. Instead of describing subtle improvements in reasoning or language understanding, companies can point to a chart and say their model scored 92% on one test while a competitor scored 88%.

Comparisons feel objective, and benchmarks provide a standardized approach to measuring performance on datasets in controlled environments. If every lab evaluates its models using the same test, it becomes easier to track progress and measure improvements across different approaches.

The problem is that the moment these benchmarks leave the lab and hit the streets, the context behind them is usually lost. One model beating another on a reasoning benchmark doesn't necessarily mean it will be better at everyday tasks like summarizing documents, editing writing, or answering complicated questions.

For most folks, those abilities matter far more than performance on carefully structured datasets in ultra-controlled lab environments.

What AI benchmarks actually test

Further muddying the AI benchmarking water is the sheer number of tests from both AI developers and external testers. But the easiest way to figure out real-world usefulness is to check what they're measuring.

Because the testing is standardized, there are several AI benchmarking tests used across the board.

- MMLU: The Massive Multitask Language Understanding benchmark evaluates models using thousands of multiple-choice questions across dozens of academic subjects, including physics, law, economics, biology, and medicine.

- GSM8K: The Grade School Math 8K benchmark measures mathematical reasoning, with the dataset containing thousands of grade-school-level math word problems that require multiple steps to solve.

- HumanEval: The HumanEval benchmark tests models using coding prompts and evaluates whether the AI generates a correct solution that passes a series of tests. This makes it extremely valuable for evaluating models meant to assist programmers.
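
To make the HumanEval idea concrete, here's a minimal sketch of how a pass/fail coding check can work: run the generated code, then run test assertions against it. This is not the benchmark's actual harness. The function name `passes_tests` and the sample problem are made up for illustration, and a real harness would sandbox the generated code rather than call `exec` on it directly.

```python
def passes_tests(candidate_source: str, test_source: str) -> bool:
    """Return True if the candidate code runs and every test assertion holds."""
    namespace = {}
    try:
        exec(candidate_source, namespace)  # define the model-written function
        exec(test_source, namespace)       # run the assertions against it
    except Exception:
        # Syntax errors, crashes, and failed assertions all count as a fail.
        return False
    return True


# Hypothetical problem: "write add(a, b) that returns the sum".
tests = "assert add(2, 3) == 5\nassert add(-1, 1) == 0"
good = "def add(a, b):\n    return a + b"
bad = "def add(a, b):\n    return a - b"

results = [passes_tests(good, tests), passes_tests(bad, tests)]
pass_rate = sum(results) / len(results)
print(results, pass_rate)  # [True, False] 0.5
```

The headline score is then just the share of problems where the generated code passes, which is why it says a lot about coding ability and very little about anything else.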

On paper, it's all useful. But in reality, the real-world translation isn't seamless. For example, while MMLU sounds impressive, it basically involves answering a huge list of exam-style questions with predefined answers. But most folks aren't using AI to take an exam; they're asking it to interpret instructions and solve problems. Furthermore, the MMLU question set itself has a high error rate and a significant Western bias.

Similarly, GSM8K is a useful indicator of logical reasoning, but most people aren't using an AI chatbot to solve elementary arithmetic puzzles. They're asking it to explain concepts, summarize information, draft content, or assist with research, yet GSM8K scores routinely appear in marketing materials as proof of general intelligence.

Benchmark contamination is a big problem

The AI models have already seen the answers during training

There's another big problem with AI benchmarking: dataset contamination.

Most AI models are trained using enormous collections of text and other information scraped from the internet. That means the training data includes research papers, textbooks, online code repositories, and many publicly available benchmark datasets.

When benchmark questions appear in training data, models can effectively memorize the answers.

Researchers refer to this issue as contamination, and it can significantly distort benchmark results. A model might appear to perform well on a test not because it has learned to reason through the problem, but because it has seen the question before during training.
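
One simple way to look for contamination is to check whether long word sequences from a benchmark question also appear in the training text. The sketch below is an assumption-laden illustration, not any lab's actual method: the function names, the 8-word window, and the sample strings are all invented for the example.

```python
def ngrams(text: str, n: int) -> set:
    """Return the set of n-word sequences in the text (lowercased)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(question: str, training_docs: list, n: int = 8) -> float:
    """Share of the question's n-grams that also occur in the training docs."""
    question_grams = ngrams(question, n)
    if not question_grams:
        return 0.0  # question shorter than n words: nothing to compare
    seen = set()
    for doc in training_docs:
        seen |= ngrams(doc, n)
    return len(question_grams & seen) / len(question_grams)


# Made-up strings standing in for scraped training data and test questions.
docs = ["quiz dump: a farmer has 12 sheep and buys 7 more sheep at the market today"]
leaked = "A farmer has 12 sheep and buys 7 more sheep at the market"
fresh = "How many apples are left if you eat three of the ten you bought"

print(overlap_ratio(leaked, docs))  # 1.0
print(overlap_ratio(fresh, docs))   # 0.0
```

A high ratio doesn't prove the model memorized the answer, but it does flag questions whose scores deserve extra skepticism.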

A research paper titled A Careful Examination of Large Language Model Performance on Grade School Arithmetic (arXiv) explores this in more detail, testing AI models on GSM1k, a benchmark similar to GSM8K that the researchers can ensure the models haven't previously seen.

It found that certain models, such as Phi, Mistral, and Llama, were "showing evidence of systematic overfitting across almost all model sizes" with accuracy dropping "up to 13%" when tested on a similar but unseen benchmark.

Further analysis suggests a positive relationship (Spearman's r² = 0.32) between a model's probability of generating an example from GSM8k and its performance gap between GSM8k and GSM1k, suggesting that many models may have partially memorized GSM8k.

So while benchmarks can show performance at a glance, there's a chance the AI model's score is boosted by its existing knowledge of the questions and answers. That's why this kind of research is so important for accuracy, and why AI benchmarks aren't always what they seem.

The AI benchmarks you should actually care about

They're not all pointless

Benchmarks aren't pointless. Having a way to make complex datasets easy to understand is no bad thing, and that's not what I'm arguing here. It's just that other benchmarks and analyses make more sense for regular folks.

Some use the collective experience of AI chatbot users, while others are more focused on the day-to-day issues that we face, such as hallucinations.

1. Human preference testing

One of the most widely used alternatives to regular AI benchmarks is human-preference testing, where sites compare models through blind human evaluations.

Sites like Hugging Face’s Leaderboard Overview, OpenLM’s Chatbot Arena, and ArenaAI’s Battle Mode give you a much stronger chance of figuring out the real human value of AI.

Typically, you submit a prompt, two AI models generate responses, and users then vote on the responses. Because the models are anonymized, voters don't know which system produced which answer. That reduces brand bias and focuses the evaluation on actual output quality.

Over time, the system collects hundreds of thousands of votes and produces a ranking based on real user preferences.
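
How do head-to-head votes become a ranking? One common approach is an Elo-style rating, the same idea used in chess. The sketch below is an illustration under that assumption; the sites named above may use a different statistical method, and the model names, starting rating, and `k` value here are hypothetical.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the Elo model."""
    return 1 / (1 + 10 ** ((rating_b - rating_a) / 400))


def record_vote(ratings: dict, winner: str, loser: str, k: float = 32) -> None:
    """Shift points from loser to winner; upsets move more points."""
    gain = k * (1 - expected_score(ratings[winner], ratings[loser]))
    ratings[winner] += gain
    ratings[loser] -= gain


# Hypothetical models and blind votes, each recorded as (winner, loser).
ratings = {"model_a": 1000.0, "model_b": 1000.0}
votes = [("model_a", "model_b"), ("model_a", "model_b"), ("model_b", "model_a")]

for winner, loser in votes:
    record_vote(ratings, winner, loser)

leaderboard = sorted(ratings, key=ratings.get, reverse=True)
print(leaderboard)  # ['model_a', 'model_b']
```

Because every vote only nudges the numbers, a single odd preference barely matters, but thousands of votes pointing the same way add up to a clear gap.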

This approach captures what traditional benchmarks often miss, such as clarity, the usefulness of responses, instruction-following, conversational tone, and more.

In other words, it evaluates the experience of using the model, not just its ability to pass academic tests.

2. Instruction-following benchmarks (IFEval)

Another alternative AI evaluation is IFEval, a tool developed by folks at Google, though it isn't officially supported by the company.

Instead of testing knowledge or reasoning, IFEval measures something much simpler: does the model actually follow instructions?

For example, prompts might include requirements such as answering directly in five points, writing an answer in JSON, avoiding specific words or characters, limiting response length, and so on.

Tests of this nature are important because they're the kinds of instructions people give AI chatbots every day. The benchmark then checks whether the model met those requirements.
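
What makes these instructions attractive for testing is that a program can verify them without any human judgment. Here's a rough sketch of what such checks could look like. These are hypothetical helper functions written for this example, not IFEval's own code.

```python
import json


def is_valid_json(response: str) -> bool:
    """Did the model answer in parseable JSON?"""
    try:
        json.loads(response)
    except json.JSONDecodeError:
        return False
    return True


def bullet_count(response: str) -> int:
    """Count lines that start with a '- ' or '* ' bullet."""
    return sum(
        1 for line in response.splitlines()
        if line.lstrip().startswith(("- ", "* "))
    )


def within_word_limit(response: str, max_words: int) -> bool:
    """Did the model stay under the requested length?"""
    return len(response.split()) <= max_words


def avoids_words(response: str, banned: list) -> bool:
    """Did the model avoid every banned word (case-insensitive)?"""
    words = {w.strip(".,!?;:\"'").lower() for w in response.split()}
    return not any(b.lower() in words for b in banned)


# Made-up response to "give me five points, under 20 words, without 'very'".
response = "- Fast\n- Cheap\n- Simple\n- Safe\n- Open"
checks = [
    bullet_count(response) == 5,
    within_word_limit(response, 20),
    avoids_words(response, ["very"]),
]
print(sum(checks) / len(checks))  # 1.0
```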

This might sound basic, but instruction-following reliability is one of the most important factors in real-world AI workflows.

3. Real-world task benchmarks (HELM)

Another effort to evaluate AI models more realistically is the Holistic Evaluation of Language Models (HELM) framework developed by researchers at the Stanford Center for Research on Foundation Models.

HELM is really useful because instead of boiling a benchmark down to a single score in a controlled lab environment, it evaluates models across multiple real-world scenarios, including:

- Summarization tasks

- Question answering

- Information extraction

- Toxicity and bias

- Robustness to prompt changes

HELM also measures additional properties beyond accuracy, such as:

- Calibration (confidence vs. correctness)

- Fairness

- Efficiency

- Robustness
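
Calibration is the least intuitive item on that list, so here's a small sketch of one common way to quantify it, known as expected calibration error: group answers by how confident the model was, then compare average confidence with actual accuracy in each group. This is an illustration, not necessarily the exact formula HELM reports, and the sample numbers are invented.

```python
def expected_calibration_error(confidences: list, correct: list, n_bins: int = 10) -> float:
    """Weighted average gap between stated confidence and actual accuracy."""
    total = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for confidence, is_correct in zip(confidences, correct):
        # Confidence 1.0 falls into the last bin rather than past it.
        index = min(int(confidence * n_bins), n_bins - 1)
        bins[index].append((confidence, is_correct))

    error = 0.0
    for group in bins:
        if not group:
            continue
        avg_confidence = sum(c for c, _ in group) / len(group)
        accuracy = sum(1 for _, ok in group if ok) / len(group)
        error += (len(group) / total) * abs(avg_confidence - accuracy)
    return error


# Invented example: the model says "90% sure" four times but is right three times.
confidences = [0.9, 0.9, 0.9, 0.9]
correct = [True, True, True, False]
print(round(expected_calibration_error(confidences, correct), 2))  # 0.15
```

A score of 0 means the model's confidence matches how often it's right; the larger the number, the less you can trust the model when it sounds sure of itself.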

The idea is that evaluating a language model requires multiple dimensions, not just a single leaderboard score.

4. TruthfulQA

Finally, one of the biggest problems with generative AI is hallucinations, where the model essentially lies and delivers false, misleading, or completely fabricated responses.

As you'd expect, figuring out if the tool you're using is pulling rubbish out of the air is important, which is why the TruthfulQA benchmark uses questions that frequently trigger common misconceptions or false answers. The benchmark checks whether the model repeats these misconceptions or correctly avoids them, using 817 questions spanning 38 categories covering myths, conspiracies, misinformation, trick questions, and more.

TruthfulQA is one of the most popular AI hallucination benchmarks, with over 5,000 Google Scholar citations, and the main thing it measures is truthfulness: does the model produce a factually correct answer, or does it confidently generate something false?

Benchmarks are useful, but they don't tell the full story

Misunderstood, or just misused?

The alternative options above highlight that benchmarks are still supremely useful for understanding AI performance. I'm not arguing that they shouldn't be used, just that most of the time, they're misused and present information that doesn't portray how useful an AI tool is, or, as per the final set of tests, how accurate it is.

I'm also painfully aware that the answer to avoiding benchmarking shouldn't necessarily be to use more specific benchmarks. The best alternative is to use a specific prompt that you're familiar with and whose output you can judge across different tools. For example, MakeUseOf Segment Lead Amir Bohlooli pushes AI tools to create a simulation and judges the output. You can also use some of the tried and tested riddles and probability puzzle prompts to see how an AI model responds, or use a series of prompts designed for specific model types.

In all cases, you're judging the output by your own metrics and how it suits your requirements rather than relying on external benchmarking to tell you what works. Better still, combine the outputs of your own prompts with more human-centric benchmarking tools, such as Chatbot Arena.

So, the next time you see a new AI model that's 13.7 percent better on MMLU, you can ask yourself the question: Does that actually make the AI model better, or is it just another controlled benchmark experiment designed to make it look good?
