Here is how experimentation used to work. You had an idea. It went to a developer. The developer had other priorities. Your idea waited.

By the time it shipped, weeks had passed. Sometimes more. And then analysis waited on the one person who understood the data. Variations sat in a backlog behind three other releases. Results took so long to interpret that the next test was already overdue before anyone had acted on the last one.

AI doesn’t change what good experimentation looks like. It removes what was getting in the way.

How does AI make it easier to run more high-quality experiments?

58.74% of all Optimizely Opal agent usage is experimentation.

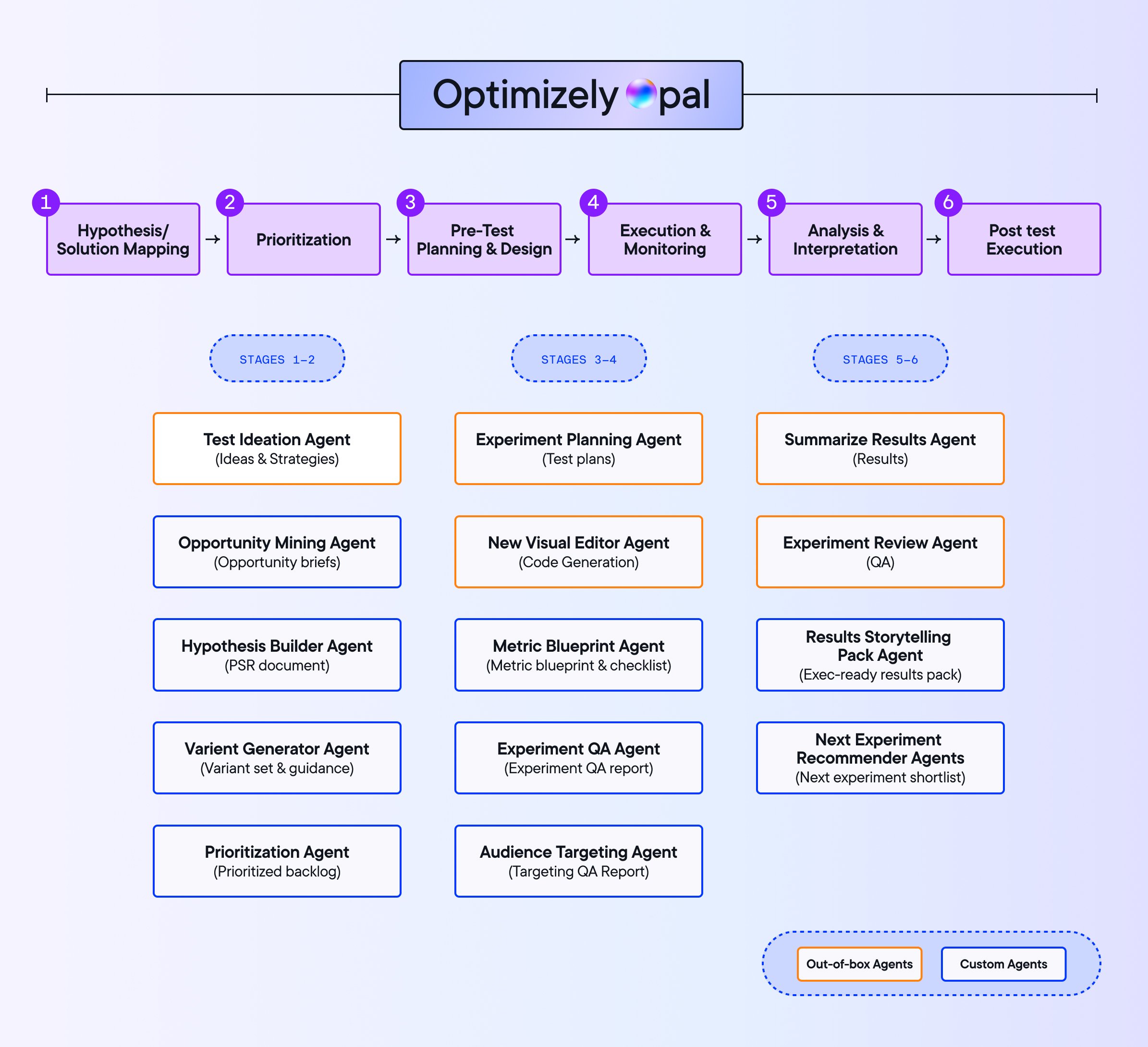

AI workflow agents now handle the work that used to involve waiting at every stage of the experimentation cycle.

Ideas get generated faster and grounded in what has actually worked before.

Test plans get structured in seconds, with the right metrics from the start. Variations get built without touching the dev queue. Results get summarized before the insight has a chance to go cold.

The result is a program where each stage feeds the next, and nothing stalls waiting for someone to have time.

However, AI implementations are struggling because most AI tools have no memory of your program.

So, does AI even work reliably, at scale, and with accountability and governance?

To answer this question, we analyzed data from 47,000 Optimizely Opal interactions across 900 companies. What we found was that AI’s impact is stuck at the individual level.

Here is the full AI experimentation benchmark report.

AI implementations are failing with out context

Most AI tools give you a response. When your AI has no memory of your program, problems show up:

- Teams repeat tests they’ve already run because nothing connects past learnings to new ideas.

- It gets harder to step back and understand how the program is actually performing because there is no thread running through it.

- When teams do use AI for suggestions, they spend more time editing outputs to fit their context than they save generating them.

When AI understands your existing experiments, metrics, feature flags, and program history, ideas stop repeating work you have already done, test plans reflect what your program has actually learned, and when a test concludes, the next step builds on what you now know rather than starting over.

Experimentation ROI is not in more ideas. It’s in the relevant ones. Not in more tests, but in a program where every learning compounds.

Optimizely Opal understands your full experimentation program. It has:

- Program-level reporting: Which experiments launched or concluded in a given timeframe, which performed best, and how win rates are trending.

- Ideation based on past results: What to test next, drawn from your experiment history rather than generic suggestions.

- Personalization campaign questions: Campaign setup, which campaigns were created, and when. This applies to standalone Personalization customers too.

Workflow agents execute across the full lifecycle and carry what came before into every step that follows.

AI agents across the experimentation workflow

Teams using agents across the full experimentation lifecycle are running 78.7% more experiments, launching 24.1% more personalization campaigns, and seeing a 9.3% lift in win rates. More tests are reaching conclusions, too, not just getting started.

Experimentation is where the operational drag is highest and where workflow agents are creating the most value.

Image source: Optimizely

Optimizely Opal agents cover the full experimentation lifecycle from ideation through post-test execution. Out-of-the-box agents handle the most common stages. Teams can also build custom agents for workflows specific to their program.

1. Experiment ideation agent

Run more tests without adding headcount.

The ideation agent draws on patterns from your past learnings. Point it at a URL, share your goals, and it generates ideas. Because it knows what you have already tested, it’s not recycling old ground.

Teams using it are seeing 18% more tests created and 33% faster run times.

2. Experiment planning agent

Hypothesis to launch-ready plan in seconds.

The planning agent sets up experiments with the right metrics, audience size, and run time from the start.

It flags when a chosen metric will take too long to reach statistical significance and suggests alternatives. Advanced techniques like CUPED are surfaced where relevant.
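For readers unfamiliar with CUPED (Controlled-experiment Using Pre-Experiment Data): the idea is to subtract the part of each user's metric that a pre-experiment covariate already predicts, which lowers variance and can shorten the time to significance. The Python sketch below illustrates the general technique only. It is not Opal's implementation, and the function name `cuped_adjust` and the sample numbers are hypothetical.

```python
from statistics import fmean, pvariance


def cuped_adjust(metric, covariate):
    """Return CUPED-adjusted values: y - theta * (x - mean(x)).

    metric:    in-experiment values per user (y)
    covariate: the same users' pre-experiment values (x)
    Hypothetical helper for illustration, not a vendor API.
    """
    if len(metric) != len(covariate) or len(metric) < 2:
        raise ValueError("need two equal-length samples with at least 2 values")

    mean_x = fmean(covariate)
    mean_y = fmean(metric)

    # Sums of squares and cross-products; the 1/n factors cancel in theta.
    ss_x = sum((x - mean_x) ** 2 for x in covariate)
    if ss_x == 0:
        # A constant covariate explains nothing, so leave the metric as-is.
        return list(metric)
    ss_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(covariate, metric))

    theta = ss_xy / ss_x  # regression slope of y on x
    return [y - theta * (x - mean_x) for x, y in zip(covariate, metric)]


# Made-up example data: pre-period and in-experiment values for six users.
pre_period = [10.0, 12.0, 9.0, 15.0, 11.0, 14.0]
in_experiment = [11.0, 13.0, 9.0, 16.0, 12.0, 15.0]

adjusted = cuped_adjust(in_experiment, pre_period)
print("variance before:", pvariance(in_experiment))
print("variance after: ", pvariance(adjusted))
```

The adjustment keeps the sample mean unchanged while removing variance explained by the covariate. In a real experiment, theta is typically estimated once on pooled control and treatment data and then applied to both groups.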

Teams using it are seeing experiments start 19% faster and reach statistical significance 25% faster.

3. Variation development agent

No queue, no dependency.

The biggest bottleneck in experimentation is getting ideas built.

Tests that make a real impact usually need custom code. That means a dev ticket, a queue, a sprint cycle, and a lead time just to get prioritized.

The variation development agent lets marketing and product teams build experiment variations themselves, inside the Visual Editor, without writing code.

Two examples of what that looks like:

- Adding a button across every product page: You describe what you want. The agent applies a consistent change across every product page in seconds, no dev ticket needed.

- Adding a new section to a page: Ask Optimizely Opal to introduce a value proposition block or a trust signal. It generates the section, places it correctly, and keeps branding consistent.

The agent checks for conflicts automatically, cutting QA time and reducing failed builds.

Our analysis of 127,000 experiments found that teams achieve the highest impact at under 10 tests per engineer. The variation development agent is what makes that ratio sustainable as programs scale.

4. Experiment summary agent

Points directly to the next test worth running.

The summary agent reviews your metrics when a test concludes, generates a plain-language summary, and recommends what to do next. It surfaces patterns teams would otherwise miss.

6.8% of experiments are already being summarized by agents. 19.54% of follow-up tests are driven by agent recommendations.

What’s our approach to AI governance?

The questions we hear most from teams adopting Opal are not about whether AI works. They’re about control.

How do we make sure AI-generated content doesn’t go live without our team checking it first?

Who actually has access to launch these tests?

Without answers to those questions, things go sideways.

Different teams run overlapping tests on the same audience without knowing it. AI outputs ship without anyone checking them against brand standards. Leadership has no visibility into what is actually working. And when something goes wrong, nobody knows where to start.

Optimizely Opal is built with governance in mind:

- Risk mitigation and brand safety: AI generates quickly. Governance ensures what goes live reflects your standards, not just what the model produced.

- Cross-functional alignment: Defined roles and processes keep experiments coordinated. No two teams accidentally test the same audience with conflicting variants.

- Single source of truth: How does your organization define a successful experiment? Governance answers that question once, consistently, so programs can scale without revisiting it each time.

- Future-proofing AI adoption: When roles like Admin, User, and Agent Builder are clearly defined and documented, the AI black box stops feeling like a black box. Leadership builds confidence. Adoption follows.

Still have more questions? We’ve got them covered.

Wrapping up

The potential of AI in experimentation is clear: accelerated workflows and more time for strategic thinking. But what excites us most at Optimizely isn't just AI assistance, it's the evolution toward true AI partnership.

We’re building an ecosystem where AI agents work proactively across your entire marketing and experimentation program, from surfacing test opportunities to ensuring brand compliance and connecting cross-product insights.

Whether you're personalizing customer experiences in retail or optimizing feature rollouts in software, AI-powered experimentation gives you the edge to lead the change.
